The rise of LLM gateways: the control layer enterprise AI was missing

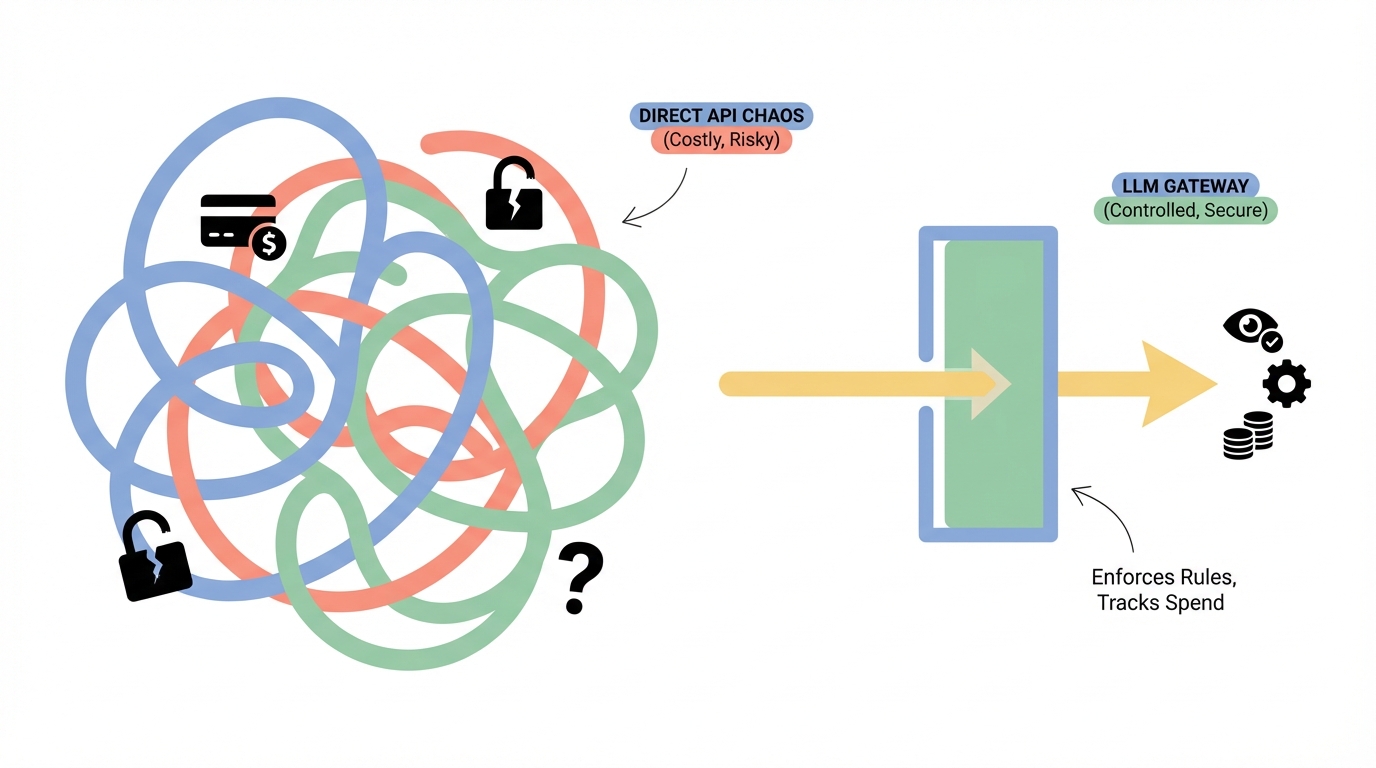

Every enterprise building with large language models hits the same wall. The proof-of-concept works. The demo impresses the board. Then reality arrives. Costs spiral. Data leaks through prompts. Nobody knows which team is calling which model. There is no audit trail. Compliance falls apart.

This is the problem LLM gateways solve.

They sit between your applications and the AI models. Every prompt and every response passes through them. They enforce rules, track spending, log interactions, and route traffic intelligently. Think of them as the missing middleware layer for enterprise AI.

The market noticed. The category grew from $400 million in 2023 to $3.9 billion in 2024. Gartner published its first Market Guide for AI Gateways in October 2025, projecting that 70% of software engineering teams building multi-model applications will use AI gateways by 2028, up from 25% in 2025.

This is not a niche category anymore. It is becoming core infrastructure.

What exactly is an LLM gateway?

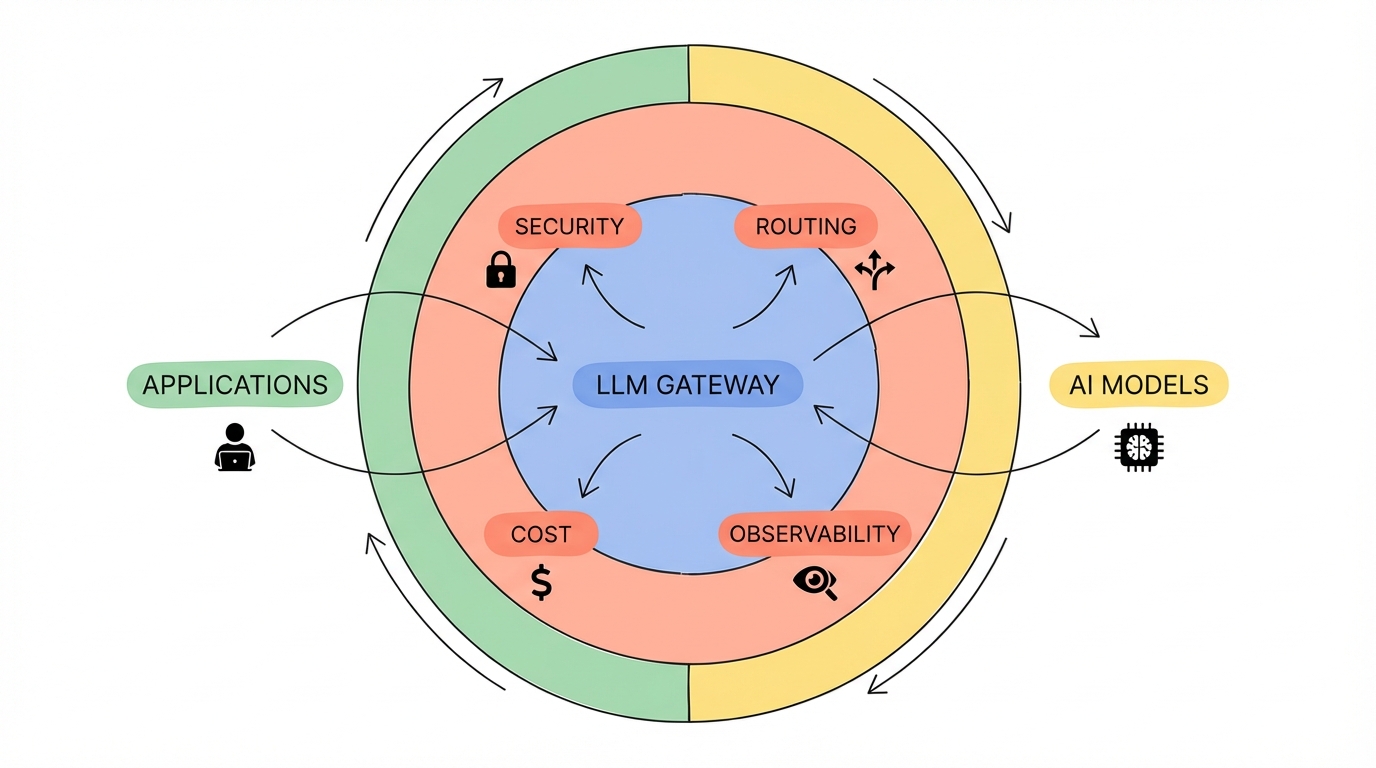

Gartner defines an AI gateway as middleware that sits between applications and AI services or models, managing security, observability, and cost optimisation.

In plain terms, it is a smart proxy. Your application sends its LLM request to the gateway instead of directly to OpenAI, Anthropic, or Google. The gateway then:

- Checks the request against your policies (is this user allowed to call this model?)

- Scans for sensitive data like PII before it leaves your network

- Routes the request to the best available model based on cost, latency, or capability

- Logs everything for audit and compliance

- Tracks token usage and cost per application, per team, per user

- Returns the response after checking it against your content safety rules

Without a gateway, each application team manages its own API keys, its own error handling, its own cost tracking, and its own compliance controls. That does not scale.
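To make the request path concrete, here is a minimal sketch of a gateway handling one request. It is an illustration, not any vendor's implementation: the `Gateway` class, the `ALLOWED_MODELS` policy table, the model names, and the `call_model` callable are all hypothetical, the PII check only catches email addresses, and token usage is approximated by word count. Routing and response safety checks are left out to keep it short.

```python
import re
import time
from dataclasses import dataclass, field

# Hypothetical policy table: which team may call which model.
ALLOWED_MODELS = {
    "support": {"small-model"},
    "research": {"small-model", "large-model"},
}

# Deliberately naive PII pattern (email addresses only), for illustration.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


@dataclass
class Gateway:
    audit_log: list = field(default_factory=list)
    tokens_by_team: dict = field(default_factory=dict)

    def handle(self, team: str, model: str, prompt: str, call_model) -> str:
        # 1. Policy check: is this team allowed to call this model?
        if model not in ALLOWED_MODELS.get(team, set()):
            raise PermissionError(f"{team} may not call {model}")

        # 2. Redact PII before the prompt leaves the network.
        safe_prompt = EMAIL.sub("[REDACTED_EMAIL]", prompt)

        # 3. Forward to the provider. call_model stands in for a real client.
        response = call_model(model, safe_prompt)

        # 4. Track usage per team (word count as a crude token proxy).
        used = len(safe_prompt.split()) + len(response.split())
        self.tokens_by_team[team] = self.tokens_by_team.get(team, 0) + used

        # 5. Record the interaction for audit.
        self.audit_log.append(
            {"ts": time.time(), "team": team, "model": model,
             "prompt": safe_prompt, "tokens": used}
        )
        return response
```

Because every application goes through `handle`, the policy table, the redaction rule, and the audit log live in one place instead of being re-implemented by each team.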

What the industry calls these products

The naming is not settled yet. You will see these products described as:

LLM Gateways — the most common term. Emphasises the routing and traffic management function. Used by Kosmoy, Kong, and Cloudflare.

AI Gateways — broader term that covers non-LLM AI services too. Gartner uses this label. Kong, TrueFoundry, and Cloudflare position here.

LLM Routers — emphasises intelligent model selection and failover. LiteLLM, OpenRouter, and Helicone use this framing.

AI Infrastructure Platforms — used by companies offering gateways as part of a larger stack including observability, prompt management, and deployment tooling. TrueFoundry and Portkey fit here.

LLM Proxy — the developer-friendly term. Helicone and Portkey sometimes use this.

AI Observability Platforms — where the gateway is secondary to monitoring and analytics. Helicone and Langfuse sit here.

GenAI Governance Platforms — the enterprise compliance framing. Kosmoy leans into this positioning heavily.

The lines blur constantly. Most products in this space offer some combination of routing, governance, observability, and cost management. The label they choose signals their primary buyer: "gateway" targets platform engineers, "governance" targets CIOs and compliance teams, "observability" targets developers.

The market landscape

Enterprise-first: Kosmoy

Kosmoy is a London-based company positioning itself as a GenAI governance and development platform. Its LLM Gateway is the core product, but it wraps that gateway inside a broader studio environment that includes no-code application building, RAG pipelines, vector database connectors, and a pre-built chat interface.

What makes Kosmoy distinctive is its governance-first approach. The gateway enforces EU AI Act compliance guardrails out of the box. It scans for PII leakage, toxic content, and prompt injection attacks before requests reach any model. Every interaction is logged for audit. This makes it a natural fit for regulated industries — banking, financial services, healthcare — where compliance is not optional.

Kosmoy supports models from OpenAI, Anthropic, AWS Bedrock, Azure AI Foundry, Google, Mistral, OpenRouter, and Hugging Face. Its LLM Router can dynamically select the cheapest model for a given prompt complexity, claiming up to 100x cost savings by leveraging price differences between models. It also offers failover routing and load balancing for reliability.
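How Kosmoy's router estimates prompt complexity is not described here, so the following is only a sketch of the general idea of cost-aware routing: estimate how demanding a prompt is, then pick the cheapest model that is capable enough. The `Model` catalogue, the tier scale, the prices, and the word-count heuristic in `required_tier` are all made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Model:
    name: str
    tier: int                 # 1 = simple tasks, 3 = hardest tasks (made-up scale)
    usd_per_1k_tokens: float  # placeholder prices, not real list prices


CATALOG = [
    Model("small", 1, 0.0002),
    Model("medium", 2, 0.003),
    Model("large", 3, 0.02),
]


def required_tier(prompt: str) -> int:
    """Crude complexity heuristic: longer or multi-step prompts need a higher tier."""
    words = len(prompt.split())
    if words > 200 or "step by step" in prompt.lower():
        return 3
    if words > 40:
        return 2
    return 1


def pick_model(prompt: str, catalog=CATALOG) -> Model:
    """Return the cheapest model whose tier is sufficient for the prompt."""
    need = required_tier(prompt)
    capable = [m for m in catalog if m.tier >= need]
    return min(capable, key=lambda m: m.usd_per_1k_tokens)
```

With these placeholder prices the large model costs 100 times as much per token as the small one, which shows where large savings figures come from: they apply only to traffic that can safely be sent to the cheapest tier.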

The platform can be deployed in a customer's own data centre or private cloud, which matters for organisations with data sovereignty requirements. Its Monitoring Hub tracks LLM fees per application, user feedback, token consumption, and error rates in real time.

For enterprises that need a complete, governed GenAI platform rather than just a routing layer, Kosmoy is worth evaluating. It trades the raw performance focus of developer-oriented gateways for a broader governance and application-building story.

The developer darlings

LiteLLM is the open-source workhorse. It provides a unified OpenAI-compatible API across 100+ LLM providers. Free to self-host. Strong budget management and rate limiting per user, team, or API key. The trade-off is setup complexity — you need YAML configuration and engineering time. Best for platform teams that want maximum control and have the bandwidth to maintain infrastructure.
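The idea behind per-key budgets can be sketched in a few lines. This is not LiteLLM's code or configuration format; `BudgetTracker`, `BudgetExceeded`, and the key names are hypothetical, and a real gateway would persist spend and reset it on a schedule.

```python
class BudgetExceeded(Exception):
    """Raised when a request would push a key past its spending limit."""


class BudgetTracker:
    def __init__(self, limits_usd: dict):
        # One limit per user, team, or API key, in US dollars.
        self.limits = dict(limits_usd)
        self.spent = {key: 0.0 for key in self.limits}

    def charge(self, key: str, cost_usd: float) -> float:
        """Record a request's cost and return the remaining budget."""
        if key not in self.limits:
            raise KeyError(f"no budget configured for {key!r}")
        if self.spent[key] + cost_usd > self.limits[key]:
            # Reject before spending, so the limit is never overshot.
            raise BudgetExceeded(f"{key!r} would exceed ${self.limits[key]:.2f}")
        self.spent[key] += cost_usd
        return self.limits[key] - self.spent[key]
```

A gateway would call `charge` with the estimated or actual cost of each request and return an error to the caller when `BudgetExceeded` is raised.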

Helicone is built in Rust for performance, adding roughly 50ms of latency. It started as an observability tool and grew into a full gateway. One-line integration (change your base URL and you are live). Zero markup pricing on self-hosted deployments. Strong caching that can cut API costs by 20-30%. Best for teams that prioritise monitoring and cost visibility alongside routing.
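Gateway caching saves money because repeated requests never reach the provider. The sketch below shows the simplest form, an exact-match cache keyed on the model and messages. It is not Helicone's implementation; `ResponseCache` and `call_model` are hypothetical names, and production caches add expiry and size limits.

```python
import hashlib
import json


class ResponseCache:
    def __init__(self):
        self._store = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model: str, messages: list) -> str:
        # Serialise deterministically so identical requests hash identically.
        payload = json.dumps({"model": model, "messages": messages}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def get_or_call(self, model: str, messages: list, call_model):
        """Return a cached response, or call the provider once and store the result."""
        key = self._key(model, messages)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        response = call_model(model, messages)
        self._store[key] = response
        return response
```

The saving depends entirely on how often identical requests recur, so the hit rate is the number to watch before relying on any headline percentage.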

OpenRouter is the simplest option. A single API endpoint that routes to any model. Five-minute setup. The downside is a 5% markup on all requests, which adds up at scale. Limited customisation. Best for small teams or prototyping.

The enterprise heavyweights

Portkey positions as an enterprise AI gateway with support for 1600+ LLMs. It offers advanced guardrails, prompt versioning, virtual key management, and compliance controls including SOC2, HIPAA, and GDPR. Pricing starts at $49 per month. Best for large teams needing detailed control over routing behaviour and enterprise-grade security.

Kong AI Gateway extends Kong's mature API management platform to handle AI traffic. In throughput tests it is reported to run over 200% faster than Portkey and 800% faster than LiteLLM. It brings enterprise API management patterns (authentication, rate limiting, consumer management) to LLM traffic. Best for organisations already using Kong for API management who want to unify their AI and API governance.

TrueFoundry offers what it calls an "AI Control Plane." It was recognised as a representative vendor in Gartner's Market Guide for AI Gateways. Its gateway adds roughly 3-4ms of latency and handles 350+ requests per second on a single vCPU. It bundles prompt versioning, model deployment, fine-tuning workflows, and Kubernetes integration. Best for teams that want full-stack LLMOps — not just a gateway but an entire AI infrastructure platform.

Cloudflare AI Gateway leverages Cloudflare's global edge network. Basic routing and caching with unified billing. Easy to set up if you are already a Cloudflare customer. Limited advanced features. Usually outgrown quickly by serious AI deployments.

The emerging challengers

Bifrost by Maxim AI claims to be 50x faster than LiteLLM with less than 11 microseconds of overhead. Built in Go. Zero-configuration deployment. Supports 15+ providers. Optimised for teams that need raw performance above all else.

LangDB positions as an enterprise AI gateway for securing, governing, and optimising AI traffic across 250+ LLMs via a unified API.

Why this category exists now

Three forces created this market.

Cost chaos. A single customer support agent handling 10,000 daily conversations can cost over $7,500 per month in API fees. Multiply that across an enterprise with dozens of AI applications and the CFO starts asking uncomfortable questions. Gateways provide the visibility and controls to answer those questions.
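The per-conversation cost implied by that figure is easy to work out. The sketch below assumes a 30-day month, and the count of 24 applications is a hypothetical stand-in for "dozens", not a number from any real deployment.

```python
DAILY_CONVERSATIONS = 10_000
MONTHLY_COST_USD = 7_500
DAYS_PER_MONTH = 30   # assumption
APPLICATIONS = 24     # hypothetical stand-in for "dozens of AI applications"

monthly_conversations = DAILY_CONVERSATIONS * DAYS_PER_MONTH
cost_per_conversation = MONTHLY_COST_USD / monthly_conversations
enterprise_monthly_cost = MONTHLY_COST_USD * APPLICATIONS

print(f"{monthly_conversations:,} conversations per month")
print(f"${cost_per_conversation:.3f} per conversation")
print(f"${enterprise_monthly_cost:,} per month across {APPLICATIONS} applications")
```

At 2.5 cents per conversation no single call looks expensive, which is why the total tends to go unnoticed until someone aggregates it per application and per team.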

Regulation arrived. The EU AI Act is real. GDPR applies to data flowing through LLM prompts. Financial services regulators demand audit trails. Healthcare has HIPAA. Enterprises cannot send data to AI models without governance controls in place. Gateways enforce those controls at the infrastructure layer, not at the application layer where controls are fragile and inconsistent.

Multi-model is the reality. No enterprise uses a single LLM. Different tasks need different models. Costs vary wildly between providers. Models have outages. New models appear constantly. A gateway abstracts this complexity away from application teams. They call one endpoint. The gateway handles everything else.
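Part of "everything else" is failover. A minimal sketch, assuming each provider is wrapped in a callable that raises a hypothetical `ProviderError` on an outage: try providers in priority order and return the first successful answer.

```python
class ProviderError(Exception):
    """Raised by a provider wrapper when the upstream call fails."""


def call_with_failover(prompt: str, providers: list):
    """Try each (name, call) pair in order and return (name, response) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderError as exc:
            # Remember why this provider failed, then move on to the next one.
            errors.append(f"{name}: {exc}")
    raise ProviderError("all providers failed: " + "; ".join(errors))
```

The application sees one call and one response. Which provider actually answered is a detail the gateway can log rather than something each team has to handle.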

What Gartner says matters

Based on Gartner's evaluation framework, enterprises should assess AI gateways on five dimensions:

Integration with existing systems. Can it work with your existing API gateways or service meshes without re-architecting everything?

Vendor innovation roadmap. Does it support emerging standards like Model Context Protocol (MCP) and Agent-to-Agent (A2A) communication? These matter as agentic AI scales.

Security and compliance strength. Can it safeguard credentials, block unsafe inputs, redact PII, and maintain audit trails?

Scalability and latency performance. Can it handle your throughput requirements without adding unacceptable latency?

Pricing alignment. Does the pricing model map to token consumption and your budgeting cycles? Some charge markups per request. Others charge platform fees. Others are free to self-host.
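A quick way to compare pricing models is to find the monthly API spend at which a percentage markup costs as much as a flat fee. The sketch below uses two figures quoted earlier in this article, a 5% markup and a $49 monthly starting price, purely as inputs. It ignores plan limits, feature differences, and the engineering cost of self-hosting, and the function names are illustrative.

```python
def monthly_gateway_cost(api_spend_usd: float, markup_pct: float = 0.0,
                         platform_fee_usd: float = 0.0) -> float:
    """Gateway cost for one month: a percentage of API spend plus a flat fee."""
    return api_spend_usd * markup_pct / 100 + platform_fee_usd


def break_even_spend(markup_pct: float, platform_fee_usd: float) -> float:
    """Monthly API spend at which a markup costs the same as a flat fee."""
    return platform_fee_usd * 100 / markup_pct


print(break_even_spend(markup_pct=5, platform_fee_usd=49))         # spend where both cost the same
print(monthly_gateway_cost(10_000, markup_pct=5))                  # markup-based pricing
print(monthly_gateway_cost(10_000, platform_fee_usd=49))           # flat-fee pricing
```

Under these simplified assumptions the two models cost the same at $980 of monthly API spend. Below that the markup is cheaper, above it the flat fee is.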

How to think about choosing

The decision depends on who you are and what you need most.

If you are a startup or small team: Start with Helicone or LiteLLM. Low overhead, fast integration, strong observability. No compliance bureaucracy to wade through.

If you are an enterprise in a regulated industry: Look at Kosmoy, Portkey, or TrueFoundry. You need governance, audit trails, PII protection, and compliance guardrails built into the infrastructure layer. Kosmoy's EU AI Act compliance and data sovereignty options make it particularly relevant for European enterprises.

If you already run Kong for API management: Kong AI Gateway is the natural extension. One platform for both API and AI traffic governance.

If performance is everything: Bifrost or TrueFoundry. Both are engineered for minimal latency at high throughput.

If you want a complete GenAI platform, not just a gateway: Kosmoy or TrueFoundry. Kosmoy bundles application building, RAG pipelines, and monitoring alongside the gateway layer; TrueFoundry bundles prompt versioning, model deployment, and fine-tuning workflows.

What comes next

Gartner's framing is telling. They describe AI gateways as shifting from simple traffic routers to intelligent governance engines. The gateway is becoming the AI Control Plane — the layer that brings together reliability, accountability, and operational excellence for multi-model and multi-agent systems.

As agentic AI scales, gateways will need to handle not just human-to-model traffic but agent-to-agent communication. MCP support is already becoming a differentiator. The gateways that win will be the ones that evolve from routing requests to governing entire AI ecosystems.

The parallel is Kubernetes. Containers existed for years before Kubernetes became the control plane that made them enterprise-ready. LLMs exist now. The gateway is becoming their control plane.

For any enterprise serious about production AI, the question is no longer whether to use an LLM gateway. It is which one.
