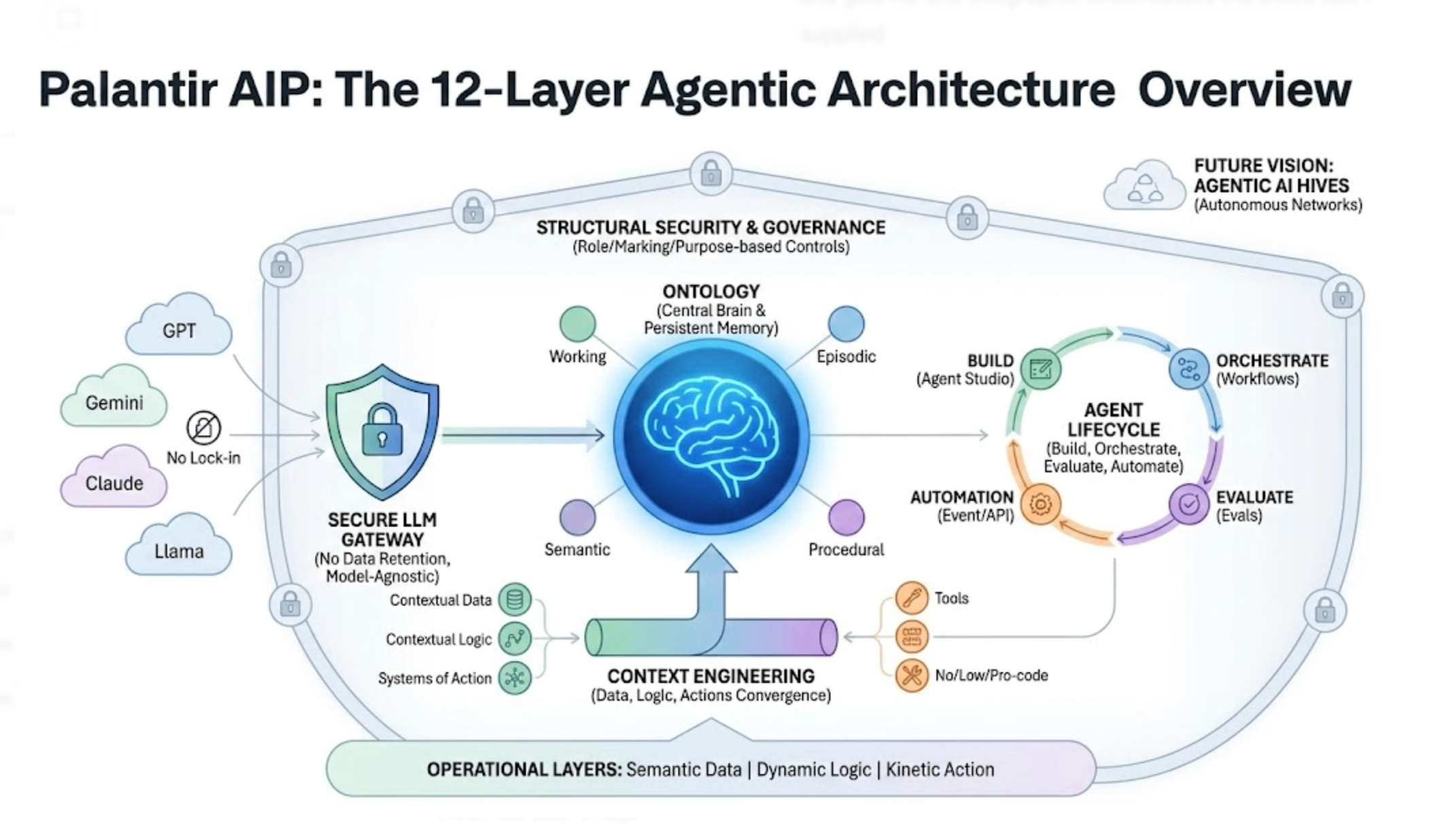

Palantir's 12-layer agentic architecture is the most ambitious enterprise AI blueprint yet


Most enterprise AI platforms bolt an LLM onto an existing data stack and call it "agentic." Palantir's AIP takes a fundamentally different approach: a 12-layer architecture where the Ontology — not the language model — sits at the center. The result is a system where agents don't just answer questions. They query billions of objects, orchestrate thousands of actions, and operate under the same security governance as human employees.

With U.S. commercial revenue growing 137% year-over-year in Q4 2025 and 571 U.S. commercial customers now on the platform, AIP is rapidly becoming the reference architecture for how large organizations think about deploying AI agents in production. This post breaks down what each layer does, why the Ontology-centric design matters, and what it means for the broader enterprise AI landscape.

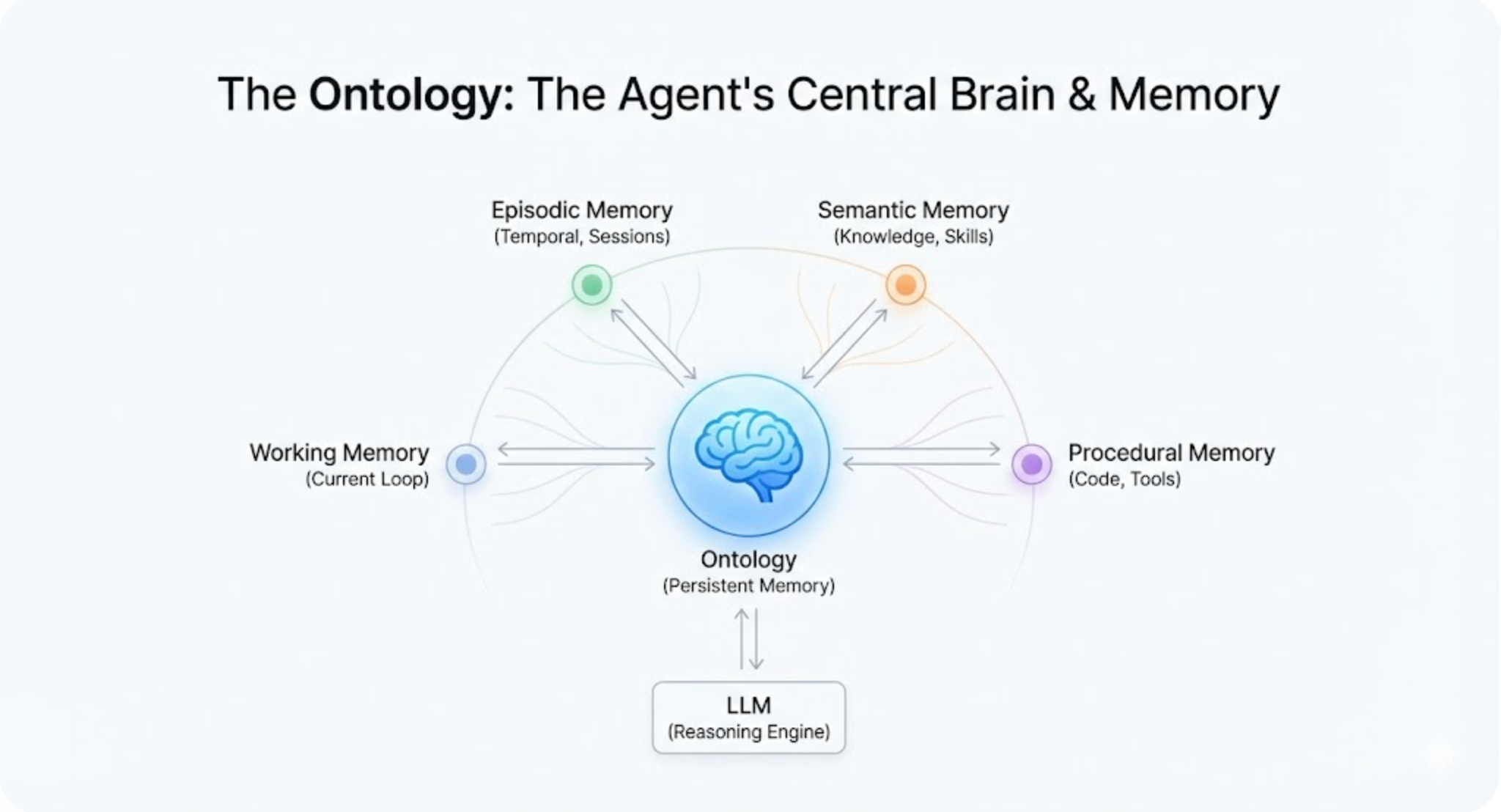

The Ontology is the agent's brain, not the LLM

Every agentic AI system needs memory. Most platforms handle this by stuffing context into an LLM's prompt window. That approach is fundamentally limited: the window has a fixed size, and its contents disappear when the call ends. Palantir's architecture inverts this by making the Ontology the agent's persistent, queryable memory system.

The Ontology models enterprise processes as objects with clear business meaning: a "Customer," a "Work Order," a "Supply Chain Route." Properties describe each object, links capture the relationships between objects, and actions define how objects can be changed. LLMs and AIP agents can query and reason over this structure in natural language because the data already has semantic meaning baked in.
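To make the objects/properties/links/actions split concrete, here is a minimal in-memory sketch. It is not Palantir's Ontology API. Every class, method, and object name here (`OntologyObject`, `Ontology`, `traverse`, `apply_action`, `close_work_order`) is hypothetical and only illustrates the shape of the model: typed objects, named links between them, and actions as the sanctioned write path.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class OntologyObject:
    """A business object (e.g. a Customer or Work Order) with typed properties."""
    object_type: str
    primary_key: str
    properties: dict[str, object] = field(default_factory=dict)


@dataclass
class Ontology:
    objects: dict[str, OntologyObject] = field(default_factory=dict)
    # Links: (source key, link name) -> keys of linked objects
    links: dict[tuple[str, str], list[str]] = field(default_factory=dict)
    # Actions: named functions that are the only sanctioned way to change objects
    actions: dict[str, Callable[["Ontology", dict], None]] = field(default_factory=dict)

    def add(self, obj: OntologyObject) -> None:
        self.objects[obj.primary_key] = obj

    def link(self, source: str, name: str, target: str) -> None:
        self.links.setdefault((source, name), []).append(target)

    def traverse(self, source: str, name: str) -> list[OntologyObject]:
        return [self.objects[k] for k in self.links.get((source, name), [])]

    def apply_action(self, name: str, params: dict) -> None:
        self.actions[name](self, params)


def close_work_order(ont: Ontology, params: dict) -> None:
    """Hypothetical action: validates state before mutating the object."""
    order = ont.objects[params["work_order"]]
    if order.properties.get("status") == "closed":
        raise ValueError("work order is already closed")
    order.properties["status"] = "closed"


ont = Ontology(actions={"close_work_order": close_work_order})
ont.add(OntologyObject("Customer", "C-1", {"name": "Acme"}))
ont.add(OntologyObject("WorkOrder", "WO-7", {"status": "open"}))
ont.link("C-1", "work_orders", "WO-7")

# "Which of Acme's work orders are open?" becomes a link traversal plus a filter.
open_orders = [o.primary_key for o in ont.traverse("C-1", "work_orders")
               if o.properties["status"] == "open"]
ont.apply_action("close_work_order", {"work_order": "WO-7"})
```

Routing every change through a named action gives one place to validate a write and, in a real system, to govern and audit it.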

What makes this especially clever is how the Ontology implements four distinct memory types drawn from cognitive science:

Working memory holds information relevant to the current agent loop: the prompt, working variables, and intermediate state needed to complete the immediate task.

Episodic memory stores relevant information across execution sessions, with temporal markers that inform subsequent operations.

Semantic memory represents learned knowledge and skills, organized categorically rather than temporally.

Procedural memory is code designed to augment the model's implicit knowledge, driving stable execution and reliable tool usage.
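The four memory types can be sketched as four fields with different lifetimes. This is an illustrative, in-process Python model only. In AIP these live in the Ontology, and the names `AgentMemory`, `Episode`, `end_session`, and `reorder_qty` are hypothetical. The key behavior it shows is that working memory is cleared at the end of a session, while episodic, semantic, and procedural memory persist.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable


@dataclass
class Episode:
    timestamp: datetime
    summary: str


@dataclass
class AgentMemory:
    # Working: scratch state for the current loop only
    working: dict[str, object] = field(default_factory=dict)
    # Episodic: time-stamped records that survive across sessions
    episodic: list[Episode] = field(default_factory=list)
    # Semantic: knowledge organized by category, not by time
    semantic: dict[str, dict[str, str]] = field(default_factory=dict)
    # Procedural: code the agent calls instead of improvising
    procedural: dict[str, Callable[..., object]] = field(default_factory=dict)

    def end_session(self, summary: str) -> None:
        """Persist an episode, then discard working memory."""
        self.episodic.append(Episode(datetime.now(timezone.utc), summary))
        self.working.clear()

    def recent_episodes(self, n: int) -> list[Episode]:
        return sorted(self.episodic, key=lambda e: e.timestamp)[-n:]


mem = AgentMemory()
mem.semantic.setdefault("suppliers", {})["S-12"] = "preferred supplier for bearings"
mem.procedural["reorder_qty"] = lambda on_hand, target: max(target - on_hand, 0)

mem.working["task"] = "check bearing stock"
qty = mem.procedural["reorder_qty"](on_hand=40, target=100)
mem.end_session(f"Recommended reordering {qty} bearings from S-12")
```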

By using the Ontology as a unified memory system, AIP ensures a consistent surface area across all four memory types for both human and agentic users. This is the architectural decision that separates Palantir from platforms where agents are essentially stateless between invocations.

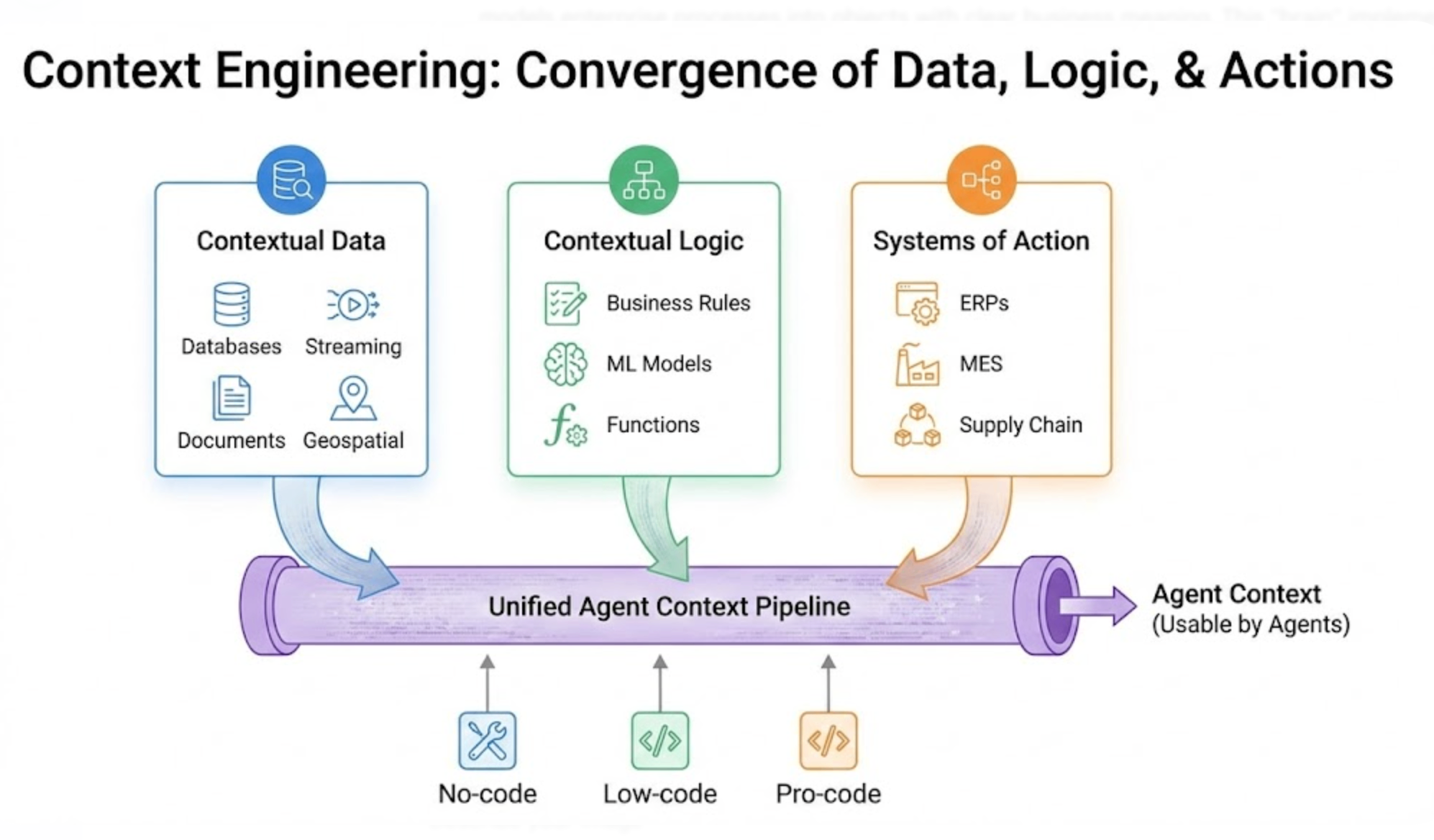

Context engineering: where data, logic, and actions converge

Layer 3 of the architecture — Context Engineering — is where raw enterprise data becomes usable agent context. This isn't just about retrieval-augmented generation. It's a full pipeline for integrating contextual data, contextual logic, and systems of action.

Contextual Data flows through MMDP (Multimodal Data Pipelines) supporting real-time, pro-code, and no-code integration paths. Think structured databases, streaming telemetry, documents, and geospatial data all feeding into the same pipeline.

Contextual Logic brings in external logic, model building, and functions: the business rules and ML models that constrain and guide agent reasoning. This is where you encode rules such as "a maintenance order requires manager approval above $50K" or "predicted failure probability above 0.7 triggers an alert."
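The two example rules above translate directly into deterministic checks that run before an agent acts. This is a minimal sketch, not AIP Logic. `MaintenanceOrder` and `evaluate_rules` are hypothetical names, and the failure probability is assumed to come from some upstream ML model.

```python
from dataclasses import dataclass

# Thresholds taken from the example rules in the text.
APPROVAL_THRESHOLD_USD = 50_000
ALERT_FAILURE_PROBABILITY = 0.7


@dataclass
class MaintenanceOrder:
    order_id: str
    amount_usd: float
    predicted_failure_prob: float  # e.g. the output of an ML model


def evaluate_rules(order: MaintenanceOrder) -> list[str]:
    """Return the constraints an agent must honor before acting on this order."""
    outcomes = []
    if order.amount_usd > APPROVAL_THRESHOLD_USD:
        outcomes.append("requires_manager_approval")
    if order.predicted_failure_prob > ALERT_FAILURE_PROBABILITY:
        outcomes.append("raise_alert")
    return outcomes


print(evaluate_rules(MaintenanceOrder("MO-1", 72_000, 0.82)))
# ['requires_manager_approval', 'raise_alert']
print(evaluate_rules(MaintenanceOrder("MO-2", 12_000, 0.30)))
# []
```

Keeping these rules outside the prompt means the LLM cannot talk its way past them. At most, it can read the outcomes.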

Systems of Action connect event-driven, streaming, and edge integration patterns. These are the write paths — the mechanisms through which agent decisions flow back into operational systems like ERPs, MES platforms, and supply chain management tools.

The key design principle: context engineering equips developers with no-, low-, and pro-code tools. A data engineer can build batch pipelines. A business analyst can configure no-code data flows. An ML engineer can write custom functions. All three produce context that agents consume through the same interface.
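The "same interface" idea can be sketched as a structural protocol that every producer satisfies, whatever tool built it. This is an assumption-laden illustration, not Palantir's interface. `ContextSource`, the three source classes, and `build_context` are hypothetical, and each class merely stands in for the kind of asset a given role would produce.

```python
from typing import Callable, Protocol


class ContextSource(Protocol):
    def fetch(self, query: str) -> list[dict]: ...


class BatchPipelineSource:
    """Stands in for a pro-code batch pipeline: rows already materialized."""
    def __init__(self, rows: list[dict]):
        self.rows = rows

    def fetch(self, query: str) -> list[dict]:
        return [r for r in self.rows if query.lower() in str(r).lower()]


class NoCodeFlowSource:
    """Stands in for an analyst-configured flow: a declarative field match."""
    def __init__(self, rows: list[dict], config: dict[str, str]):
        self.rows, self.config = rows, config

    def fetch(self, query: str) -> list[dict]:
        field_name = self.config["match_field"]
        return [r for r in self.rows if r.get(field_name) == query]


class FunctionSource:
    """Stands in for an ML engineer's custom function."""
    def __init__(self, fn: Callable[[str], list[dict]]):
        self.fn = fn

    def fetch(self, query: str) -> list[dict]:
        return self.fn(query)


def build_context(sources: list[ContextSource], query: str) -> list[dict]:
    """The agent sees one merged list, regardless of who built each source."""
    context: list[dict] = []
    for source in sources:
        context.extend(source.fetch(query))
    return context


batch = BatchPipelineSource([{"site": "Plant A", "downtime_h": 3},
                             {"site": "Plant B", "downtime_h": 1}])
flow = NoCodeFlowSource([{"site": "Plant A", "open_orders": 5}], {"match_field": "site"})
model = FunctionSource(lambda site: [{"site": site, "failure_prob": 0.42}])
context = build_context([batch, flow, model], "Plant A")
```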

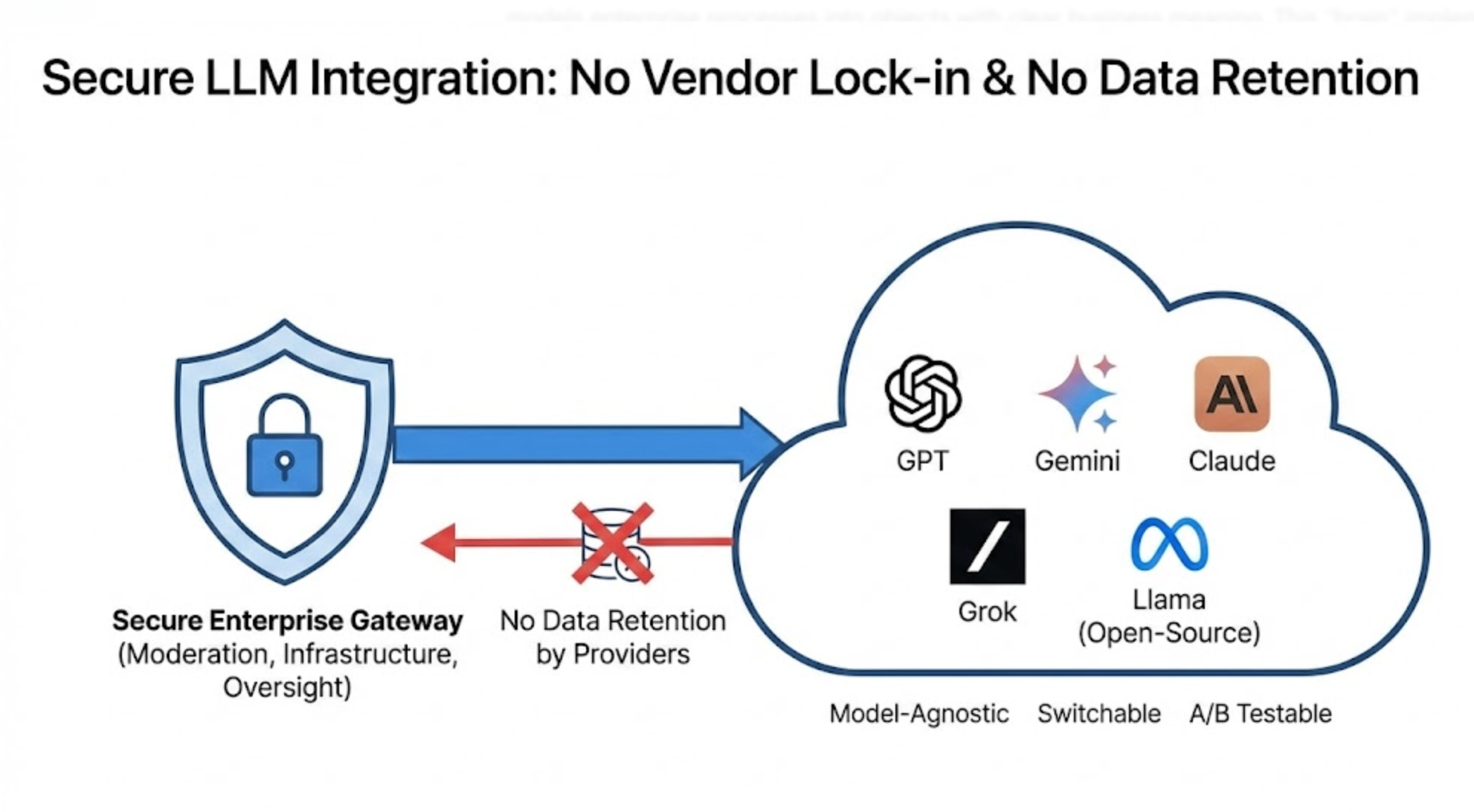

Secure LLM integration without vendor lock-in

Layer 1 handles something every enterprise worries about: how do you use commercial LLMs without sending your proprietary data to third-party providers who might retain it for training?

AIP provides secure access to the full range of commercial models — GPT, Gemini, Claude, Grok — alongside open-source models like Llama, all through Palantir-managed infrastructure. The critical guarantee: no transmitted data is retained by third-party providers.

The architecture supports three integration tiers. Moderation handles PII obfuscation, content detection, and safety filtering before any data reaches an LLM. Infrastructure provides smart caching and dynamic retry logic to manage cost and reliability. Validation and Oversight covers model enablement, usage tracking, and rate limiting.
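A client-side sketch can show how two of these tiers compose: redact first, then consult a cache, then call the model with exponential-backoff retries. This is illustrative only and not how AIP implements moderation. The two regexes catch only simple email and US-style phone formats, and `ModeratedClient` and `flaky_model` are hypothetical. Usage tracking and rate limiting are omitted.

```python
import re
import time
from typing import Callable

EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
US_PHONE = re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")


def obfuscate_pii(text: str) -> str:
    """Moderation step: mask emails and US-style phone numbers."""
    text = EMAIL.sub("[EMAIL]", text)
    return US_PHONE.sub("[PHONE]", text)


class ModeratedClient:
    def __init__(self, model_call: Callable[[str], str],
                 attempts: int = 3, base_delay_s: float = 0.01):
        self.model_call = model_call
        self.attempts = max(1, attempts)
        self.base_delay_s = base_delay_s
        self.cache: dict[str, str] = {}

    def complete(self, prompt: str) -> str:
        safe = obfuscate_pii(prompt)      # redact before anything leaves
        if safe in self.cache:            # cache keyed on the redacted prompt
            return self.cache[safe]
        for attempt in range(self.attempts):
            try:
                result = self.model_call(safe)
                break
            except ConnectionError:
                if attempt == self.attempts - 1:
                    raise
                time.sleep(self.base_delay_s * 2 ** attempt)  # exponential backoff
        self.cache[safe] = result
        return result


calls: list[str] = []


def flaky_model(prompt: str) -> str:
    """Fake provider that fails once, then succeeds."""
    calls.append(prompt)
    if len(calls) == 1:
        raise ConnectionError("transient outage")
    return f"ok: {prompt}"


client = ModeratedClient(flaky_model)
first = client.complete("Email jane.doe@example.com or call 555-123-4567 about order 88")
second = client.complete("Email jane.doe@example.com or call 555-123-4567 about order 88")
```

The provider sees two calls (one failure, one retry). The repeated prompt is then served from the cache, and the raw email and phone number never reach it.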

The platform is explicitly model-agnostic. Commercial providers (OpenAI, Google, Anthropic, xAI) and open-source models (Meta's Llama) plug into the same interface. Organizations can run custom integrations or bring their own models. This isn't just a checkbox feature — it means enterprises can switch models per use case, A/B test providers, or fall back gracefully when a provider has an outage.
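Per-use-case routing with graceful fallback needs little more than a shared interface and an ordered preference list. The sketch below assumes a simple `complete(prompt)` contract. `ModelRouter`, `StubProvider`, `ProviderUnavailable`, and the provider names are hypothetical placeholders, not any vendor's SDK.

```python
from typing import Protocol


class ProviderUnavailable(Exception):
    pass


class ModelProvider(Protocol):
    name: str
    def complete(self, prompt: str) -> str: ...


class StubProvider:
    """Hypothetical stand-in for any commercial or open-source model."""
    def __init__(self, name: str, healthy: bool = True):
        self.name, self.healthy = name, healthy

    def complete(self, prompt: str) -> str:
        if not self.healthy:
            raise ProviderUnavailable(self.name)
        return f"[{self.name}] {prompt}"


class ModelRouter:
    def __init__(self, routes: dict[str, list[ModelProvider]]):
        self.routes = routes  # use case -> providers in order of preference

    def complete(self, use_case: str, prompt: str) -> str:
        failures = []
        for provider in self.routes[use_case]:
            try:
                return provider.complete(prompt)
            except ProviderUnavailable as exc:
                failures.append(str(exc))  # fall through to the next provider
        raise RuntimeError(f"all providers failed for {use_case!r}: {failures}")


primary = StubProvider("provider-a", healthy=False)  # simulated outage
backup = StubProvider("provider-b")
router = ModelRouter({"summarize": [primary, backup], "extract": [backup]})
print(router.complete("summarize", "Summarize the shift report"))
# [provider-b] Summarize the shift report
```

A/B testing fits the same shape: swap the list for a weighted choice over providers.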

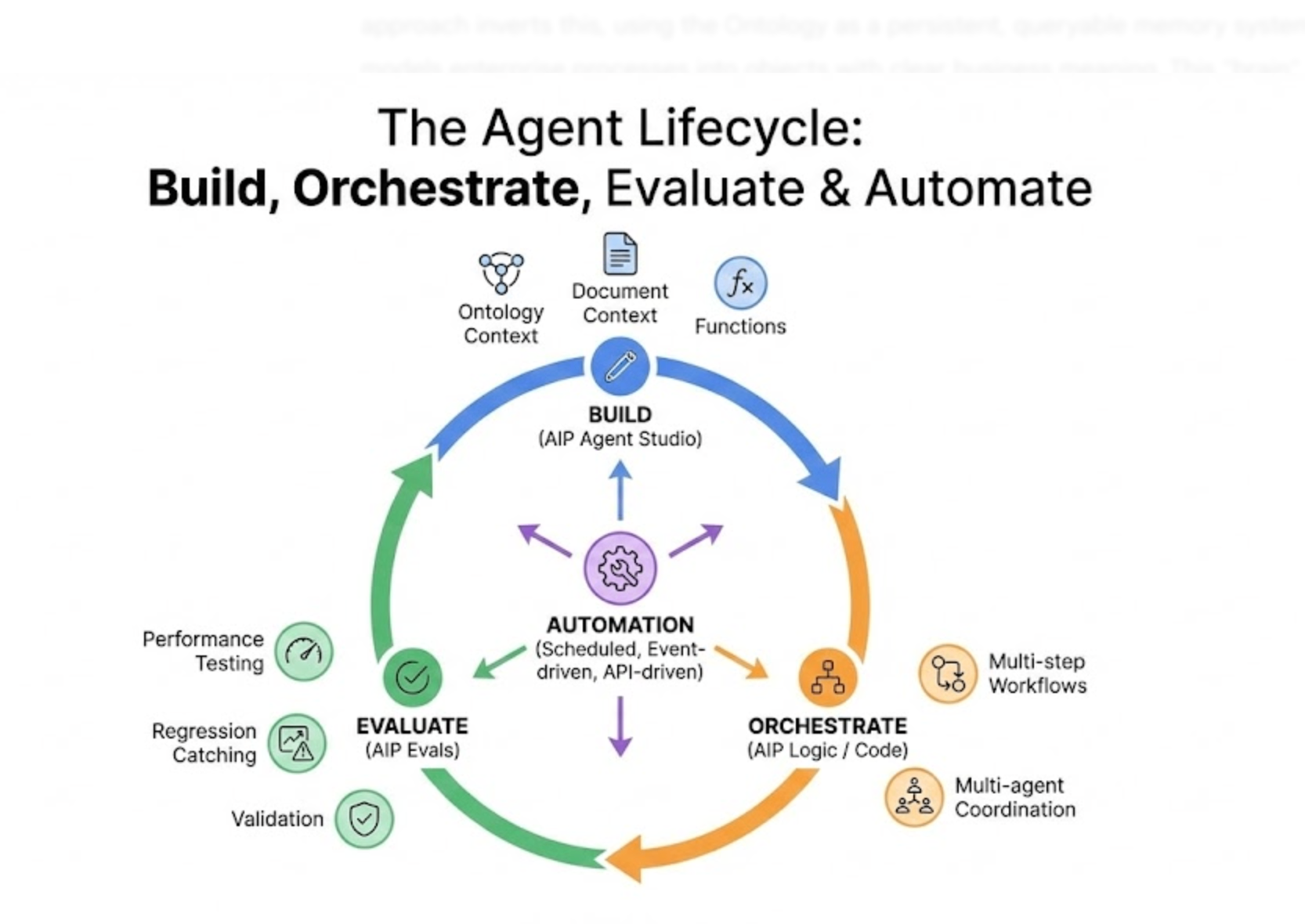

The agent lifecycle: build, orchestrate, evaluate

Layers 7 and 8 define how agents actually get built and deployed. The lifecycle has three phases:

Agent Building uses AIP Agent Studio, where agents are equipped with enterprise-specific information and tools. Agents can consume Ontology context (querying real business objects), document context (searching unstructured knowledge bases), or custom function-backed context. Construction supports the full spectrum from no-code visual builders to pro-code workspaces.

Agent Orchestration manages how agents coordinate in production. Durable orchestrations, configured through AIP Logic or Code Workspaces, handle the choreography of multi-step, multi-agent workflows. This isn't simple chaining. It's the kind of orchestration where an agent monitoring inventory levels triggers a procurement agent, which checks budget constraints through a finance agent, which escalates to a human approver if the amount exceeds a set threshold.
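The inventory → procurement → finance → human chain can be sketched as plain functions that hand off to one another and share a run log. This version is deliberately simplified. It runs in memory, so unlike a durable orchestration it would lose its state on a crash. All agent names, thresholds, and prices are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Run:
    log: list[str] = field(default_factory=list)


def inventory_agent(run: Run, stock: int, reorder_point: int, target: int) -> Optional[int]:
    if stock >= reorder_point:
        run.log.append("inventory: stock OK")
        return None
    qty = target - stock
    run.log.append(f"inventory: reorder {qty} units")
    return qty


def finance_agent(run: Run, amount: int, budget_remaining: int, approval_threshold: int) -> str:
    """Checks budget; escalates large but affordable spends to a human."""
    if amount > budget_remaining:
        run.log.append("finance: over budget")
        return "blocked"
    if amount > approval_threshold:
        run.log.append(f"finance: escalating ${amount:,} to a human approver")
        return "pending_human_approval"
    run.log.append("finance: approved")
    return "approved"


def procurement_agent(run: Run, qty: int, unit_price: int,
                      budget_remaining: int, approval_threshold: int) -> str:
    amount = qty * unit_price
    decision = finance_agent(run, amount, budget_remaining, approval_threshold)
    if decision != "approved":
        return decision
    run.log.append(f"procurement: PO issued for ${amount:,}")
    return "po_issued"


def orchestrate(stock: int, unit_price: int,
                budget_remaining: int = 100_000, approval_threshold: int = 50_000):
    run = Run()
    qty = inventory_agent(run, stock, reorder_point=200, target=1_000)
    if qty is None:
        return "no_action", run.log
    return procurement_agent(run, qty, unit_price, budget_remaining, approval_threshold), run.log


status, log = orchestrate(stock=150, unit_price=80)
# 850 units * $80 = $68,000: within budget but above the threshold, so a human decides.
```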

Evaluation Suites provide integrated testing frameworks for comparing agent performance, catching regressions, and validating that agent behavior meets enterprise standards before deployment. AIP Evals tests and validates AI outputs systematically — something most enterprise AI deployments still handle manually.
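The core of a regression gate is small: score a baseline and a candidate on the same cases, then block the release if the candidate scores lower. This sketch is not AIP Evals. The substring-match scoring, `release_gate`, and the canned agent answers are hypothetical stand-ins for real evaluators and real agents.

```python
from typing import Callable

EvalCase = tuple[str, str]  # (prompt, substring the answer must contain)


def score(agent: Callable[[str], str], cases: list[EvalCase]) -> float:
    passed = sum(1 for prompt, expected in cases if expected in agent(prompt))
    return passed / len(cases)


def release_gate(baseline: Callable[[str], str], candidate: Callable[[str], str],
                 cases: list[EvalCase], tolerance: float = 0.0) -> tuple[bool, float, float]:
    """Block the candidate if it scores worse than the baseline."""
    base, cand = score(baseline, cases), score(candidate, cases)
    return cand + tolerance >= base, base, cand


CASES = [("status of WO-7", "open"), ("owner of WO-7", "Acme"), ("priority of WO-7", "high")]
BASELINE_ANSWERS = {"status of WO-7": "WO-7 is open",
                    "owner of WO-7": "Owned by Acme",
                    "priority of WO-7": "Priority is high"}
CANDIDATE_ANSWERS = {**BASELINE_ANSWERS, "priority of WO-7": "Priority is unknown"}

ok, base, cand = release_gate(lambda p: BASELINE_ANSWERS[p],
                              lambda p: CANDIDATE_ANSWERS[p], CASES)
# The candidate regressed on one case, so ok is False.
```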

On the automation side (Layer 8), AIP supports three patterns: scheduled automation for recurring tasks, event-driven automation triggered by data changes or condition matches, and API-driven automation for programmatic integration with external systems.
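The three trigger styles differ only in what starts a handler: a clock, a data change, or an external call. The sketch below puts all three on one hypothetical `AutomationHub` class to show the contrast. It is not AIP's automation API, and the handlers are placeholder lambdas.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import Callable

Handler = Callable[[dict], str]


@dataclass
class AutomationHub:
    scheduled: list[tuple[timedelta, Handler]] = field(default_factory=list)
    event_rules: list[tuple[Callable[[dict], bool], Handler]] = field(default_factory=list)
    endpoints: dict[str, Handler] = field(default_factory=dict)
    last_run: dict[int, datetime] = field(default_factory=dict)

    def tick(self, now: datetime) -> list[str]:
        """Scheduled: run each job whose interval has elapsed."""
        results = []
        for i, (interval, handler) in enumerate(self.scheduled):
            last = self.last_run.get(i)
            if last is None or now - last >= interval:
                self.last_run[i] = now
                results.append(handler({"time": now}))
        return results

    def on_change(self, record: dict) -> list[str]:
        """Event-driven: run handlers whose condition matches the changed record."""
        return [handler(record) for condition, handler in self.event_rules if condition(record)]

    def call(self, name: str, payload: dict) -> str:
        """API-driven: an external system invokes a named automation."""
        return self.endpoints[name](payload)


hub = AutomationHub()
hub.scheduled.append((timedelta(hours=24), lambda ctx: "daily stock report"))
hub.event_rules.append((lambda r: r.get("failure_prob", 0) > 0.7,
                        lambda r: f"alert: {r['asset']}"))
hub.endpoints["create_ticket"] = lambda p: f"ticket created for {p['asset']}"

t0 = datetime(2026, 1, 1, 8, 0)
first = hub.tick(t0)
second = hub.tick(t0 + timedelta(hours=1))
third = hub.tick(t0 + timedelta(hours=24))
alerts = hub.on_change({"asset": "pump-3", "failure_prob": 0.82})
ticket = hub.call("create_ticket", {"asset": "pump-3"})
```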

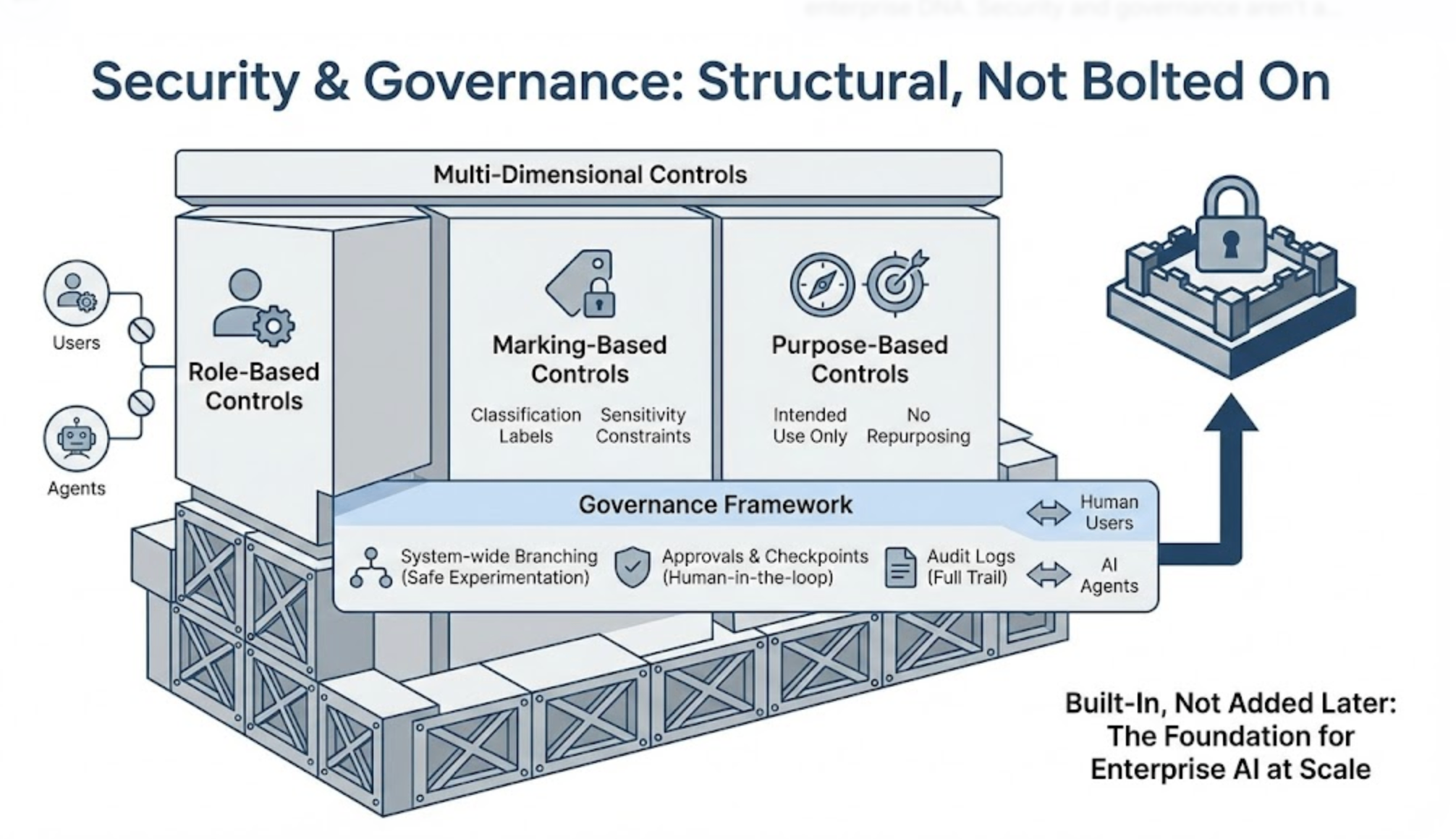

Security and governance aren't bolted on — they're structural

Layer 6 might be the most telling indicator of Palantir's enterprise DNA. Security and governance aren't a sidebar feature. They're woven into every layer of the architecture.

The controls operate across multiple dimensions: role-based controls restrict what users and agents can access based on their organizational role. Marking-based controls apply classification labels to data, constraining access based on sensitivity. Purpose-based controls ensure data is only used for its intended purpose — not repurposed by an agent for an unrelated task.
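The three control dimensions can be layered as sequential checks that apply identically to a human and an agent. This minimal sketch is an assumption about how such checks compose, not Palantir's permission model. `Principal`, `Resource`, `check_access`, and all role, marking, and purpose names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Principal:
    """A human user or an agent; both go through the same check."""
    name: str
    roles: frozenset[str]
    clearances: frozenset[str]  # markings this principal may see


@dataclass(frozen=True)
class Resource:
    name: str
    required_role: str
    markings: frozenset[str]          # classification labels on the data
    allowed_purposes: frozenset[str]


def check_access(p: Principal, r: Resource, purpose: str) -> tuple[bool, str]:
    if r.required_role not in p.roles:
        return False, "role"
    if not r.markings <= p.clearances:  # every marking must be cleared
        return False, "marking"
    if purpose not in r.allowed_purposes:
        return False, "purpose"
    return True, "ok"


payroll = Resource("payroll", "hr_analyst", frozenset({"PII"}), frozenset({"payroll_processing"}))
hr_agent = Principal("hr-agent", frozenset({"hr_analyst"}), frozenset({"PII"}))
new_hire = Principal("new-hire", frozenset({"hr_analyst"}), frozenset())

print(check_access(hr_agent, payroll, "payroll_processing"))  # (True, 'ok')
print(check_access(hr_agent, payroll, "marketing_campaign"))  # (False, 'purpose')
print(check_access(new_hire, payroll, "payroll_processing"))  # (False, 'marking')
```

The purpose check is what stops an agent with legitimate access from reusing the same data for an unrelated task.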

System-wide branching enables safe experimentation. Approvals and checkpoints enforce human-in-the-loop governance where business rules require it. Every agent operation is subject to the same governance framework that applies to human users — the same audit logs, the same access policies, the same approval workflows.
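Branching, approval checkpoints, and audit logging can be illustrated together on a toy key-value store. This sketch is hypothetical throughout (`GovernedStore`, `branch`, `update`, and the change-size threshold are not AIP features by those names). The point is that the same `update` path, with the same audit entry, applies whether the actor is a person or an agent.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class GovernedStore:
    data: dict[str, float]
    audit_log: list[dict] = field(default_factory=list)

    def _audit(self, actor: str, action: str, detail: str) -> None:
        self.audit_log.append({"at": datetime.now(timezone.utc).isoformat(),
                               "actor": actor, "action": action, "detail": detail})

    def branch(self) -> "GovernedStore":
        """Experiments write to a copy, never to the main data."""
        return GovernedStore(dict(self.data))

    def update(self, actor: str, key: str, value: float,
               approval_threshold: float, approved_by: Optional[str] = None) -> bool:
        """Apply a change; large changes need a named approver. Every attempt is logged."""
        change = abs(value - self.data.get(key, 0.0))
        if change > approval_threshold and approved_by is None:
            self._audit(actor, "update_blocked", f"{key}: approval required")
            return False
        self.data[key] = value
        self._audit(actor, "update", f"{key}={value} approved_by={approved_by}")
        return True


main = GovernedStore({"safety_stock:pump-3": 20.0})
trial = main.branch()
trial.update("planning-agent", "safety_stock:pump-3", 60.0, approval_threshold=100.0)

blocked = main.update("planning-agent", "safety_stock:pump-3", 500.0, approval_threshold=100.0)
approved = main.update("planning-agent", "safety_stock:pump-3", 500.0,
                       approval_threshold=100.0, approved_by="ops-manager")
```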

This is where Palantir's two decades of government and defense work pays off. While most AI platforms treat security as a feature to add later, AIP treats it as a structural requirement. When you're deploying agents that can query billions of objects and orchestrate thousands of actions, "move fast and break things" isn't an option.

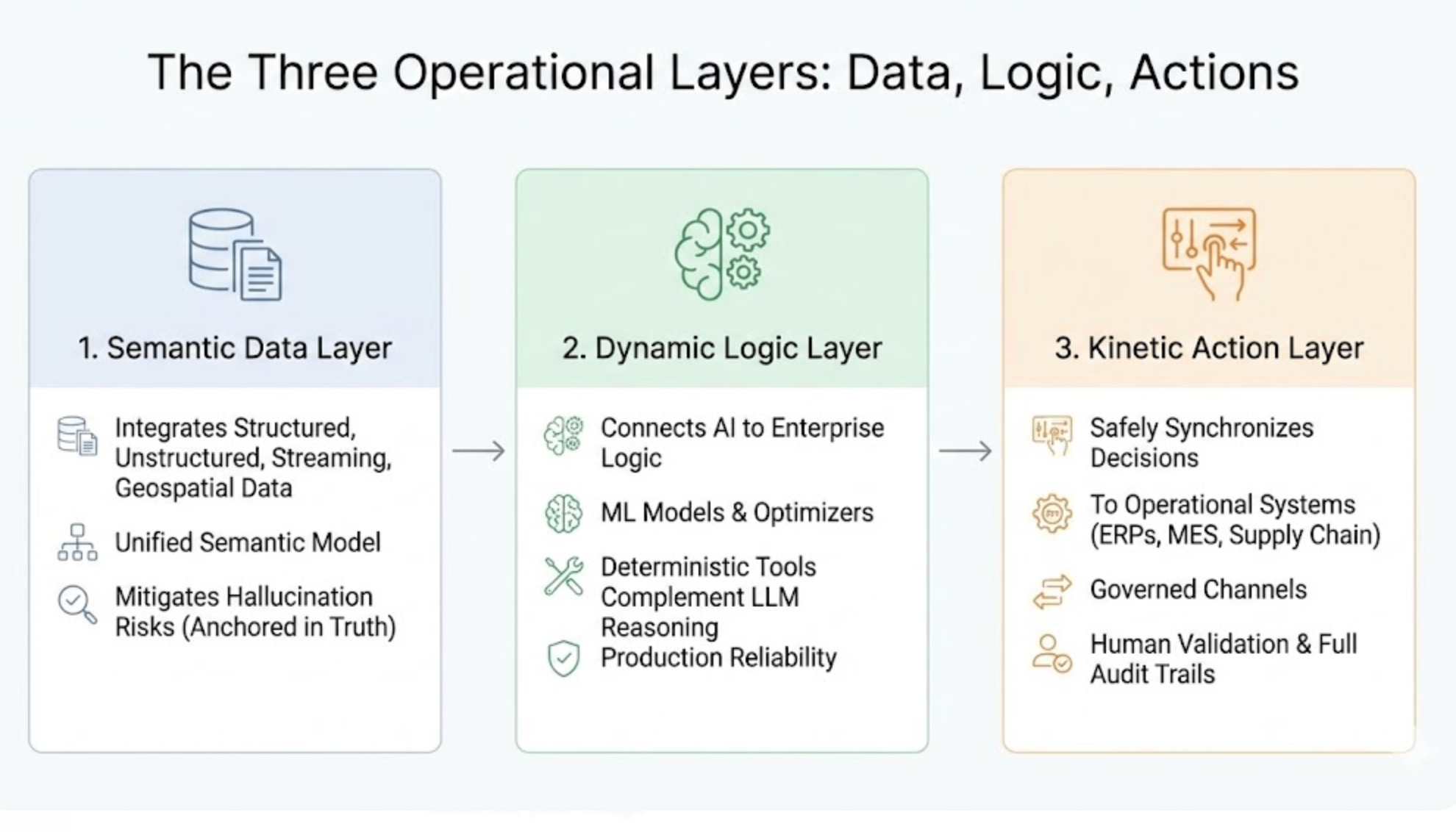

The three operational layers: data, logic, actions

Palantir's AIP platform page describes three layers that map cleanly to how enterprises actually think about AI integration:

The Semantic Data Layer integrates structured, unstructured, streaming, and geospatial data into a unified semantic model. By anchoring AI decisions in operational truth, it mitigates hallucination risks: an agent that reads a customer order number from a live Ontology object has no reason to invent one.
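One complementary guardrail is to check model output against the Ontology before it goes anywhere: every identifier the draft cites must resolve to a real object. This is a minimal sketch under stated assumptions. The `SO-` order-number format is hypothetical, and `known` stands in for the result of an Ontology query.

```python
import re

# Hypothetical order-number format for this example.
ORDER_ID = re.compile(r"\bSO-\d+\b")


def ungrounded_ids(draft: str, known_ids: set[str]) -> list[str]:
    """Order numbers in the draft that do not exist as Ontology objects."""
    return [oid for oid in ORDER_ID.findall(draft) if oid not in known_ids]


def require_grounding(draft: str, known_ids: set[str]) -> str:
    missing = ungrounded_ids(draft, known_ids)
    if missing:
        raise ValueError(f"draft cites unknown orders: {missing}")
    return draft


# In practice known_ids would come from querying the Ontology; here it is a literal set.
known = {"SO-1001", "SO-1002"}
print(ungrounded_ids("SO-1001 shipped; SO-9999 is delayed", known))  # ['SO-9999']
```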

The Dynamic Logic Layer connects AI to enterprise business logic, ML models, optimizers, and computational assets distributed across environments. This is what allows deterministic tools to complement probabilistic LLM reasoning — a critical pattern for production reliability.
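The deterministic/probabilistic split usually looks like this: the LLM decides *which* tool to call and extracts its arguments, and plain code computes the number. In the sketch below, the classic economic order quantity formula, EOQ = √(2DS/H), serves as an illustrative stand-in optimizer (not one the source names). The `proposed` dict is a hand-written stand-in for a model's structured output.

```python
import math


def economic_order_quantity(annual_demand: float, order_cost: float,
                            holding_cost: float) -> float:
    """Classic EOQ formula sqrt(2DS/H): deterministic, same inputs give the same answer."""
    return math.sqrt(2 * annual_demand * order_cost / holding_cost)


TOOLS = {"economic_order_quantity": economic_order_quantity}


def execute_tool_call(call: dict) -> float:
    """Run a tool call proposed by the model, but only from an allowlist."""
    tool = TOOLS.get(call.get("tool"))
    if tool is None:
        raise ValueError(f"unknown tool: {call.get('tool')!r}")
    return tool(**call["arguments"])


# Stand-in for an LLM's structured output after reading a planner's request.
proposed = {"tool": "economic_order_quantity",
            "arguments": {"annual_demand": 1200, "order_cost": 50, "holding_cost": 3}}
print(execute_tool_call(proposed))  # 200.0
```

If the model misreads the request, the error shows up in the arguments, where it can be inspected. The arithmetic itself is never improvised.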

The Kinetic Action Layer safely synchronizes AI-driven decisions back to operational systems. ERPs, MES platforms, supply chain management tools, and edge platforms all receive agent actions through governed channels with human validation capabilities and full audit trails.

What the numbers say about adoption

The growth metrics tell the adoption story more clearly than any architecture diagram. Palantir raised its full-year 2025 revenue guidance to $4.4 billion, with 2026 projections reaching $7.18 billion, roughly 61% year-over-year growth. Its Rule of 40 score (revenue growth rate plus profit margin) reached 127% in Q4 2025, a figure that makes most SaaS companies look pedestrian.

More telling is the deal composition. In Q3 2025, Palantir closed 204 deals worth more than $1 million and 53 deals exceeding $10 million. Total Contract Value reached a record $2.76 billion, up 151% year-over-year. Net dollar retention hit 134%, meaning that after expansion, contraction, and churn, the customers Palantir had a year earlier now spend 34% more than they did then.
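Both headline ratios are simple arithmetic. The functions below use the standard textbook definitions. The inputs are hypothetical, chosen only so the net dollar retention comes out at 134%, and they are not Palantir's actual revenue breakdown.

```python
def net_dollar_retention(start_revenue: float, expansion: float,
                         contraction: float, churned: float) -> float:
    """Revenue today from last year's customer cohort, divided by that cohort's revenue a year ago."""
    return (start_revenue + expansion - contraction - churned) / start_revenue


def rule_of_40(revenue_growth_pct: float, profit_margin_pct: float) -> float:
    """Growth rate plus profit margin; 40 or above is the conventional bar."""
    return revenue_growth_pct + profit_margin_pct


# Hypothetical cohort in $M, not Palantir's actual breakdown.
ndr = net_dollar_retention(start_revenue=100.0, expansion=40.0, contraction=2.0, churned=4.0)
print(f"NDR: {ndr:.0%}")  # NDR: 134%
# Hypothetical company: 30% growth and a 15% margin.
print(rule_of_40(30, 15))  # 45
```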

Palantir's U.S. commercial customer count reached 571, up 49% year-over-year. Forrester recognized Palantir as a Leader in AI/ML Platforms, and Dresner Advisory Services ranked Palantir #1 in AI and Data Science.

The Rackspace partnership announced in February 2026 aims to compress deployment timelines from months or years to weeks — addressing the biggest practical barrier to enterprise AI adoption.

Where this architecture is heading

The 2026 innovation Palantir is betting on is "Agentic AI Hives" — autonomous agent networks that handle complex supply chain disruptions without human intervention. This represents a philosophical shift from decision-support to decision-execution.

The 12-layer architecture makes this evolution possible in ways that simpler platforms can't match. When your agents share a unified Ontology memory, operate under consistent governance, and connect through managed orchestration, scaling from individual agents to coordinated agent networks is an engineering problem, not an architectural rewrite.

For engineering teams evaluating enterprise AI platforms, Palantir's architecture raises the bar on what "production-ready" means. It's not enough to deploy an agent that can answer questions. Production-ready means the agent operates under the same security controls as your employees, writes actions back to operational systems through governed channels, and maintains auditable memory across sessions.

The question isn't whether this level of infrastructure is necessary. It's whether your organization can afford to deploy agents without it.
