OpenClaw and the lobster-shaped future of AI agents

175,000 GitHub stars in under two weeks. OpenClaw represents the clearest signal yet that personal AI agents have crossed from experimental curiosity into daily-driver territory.

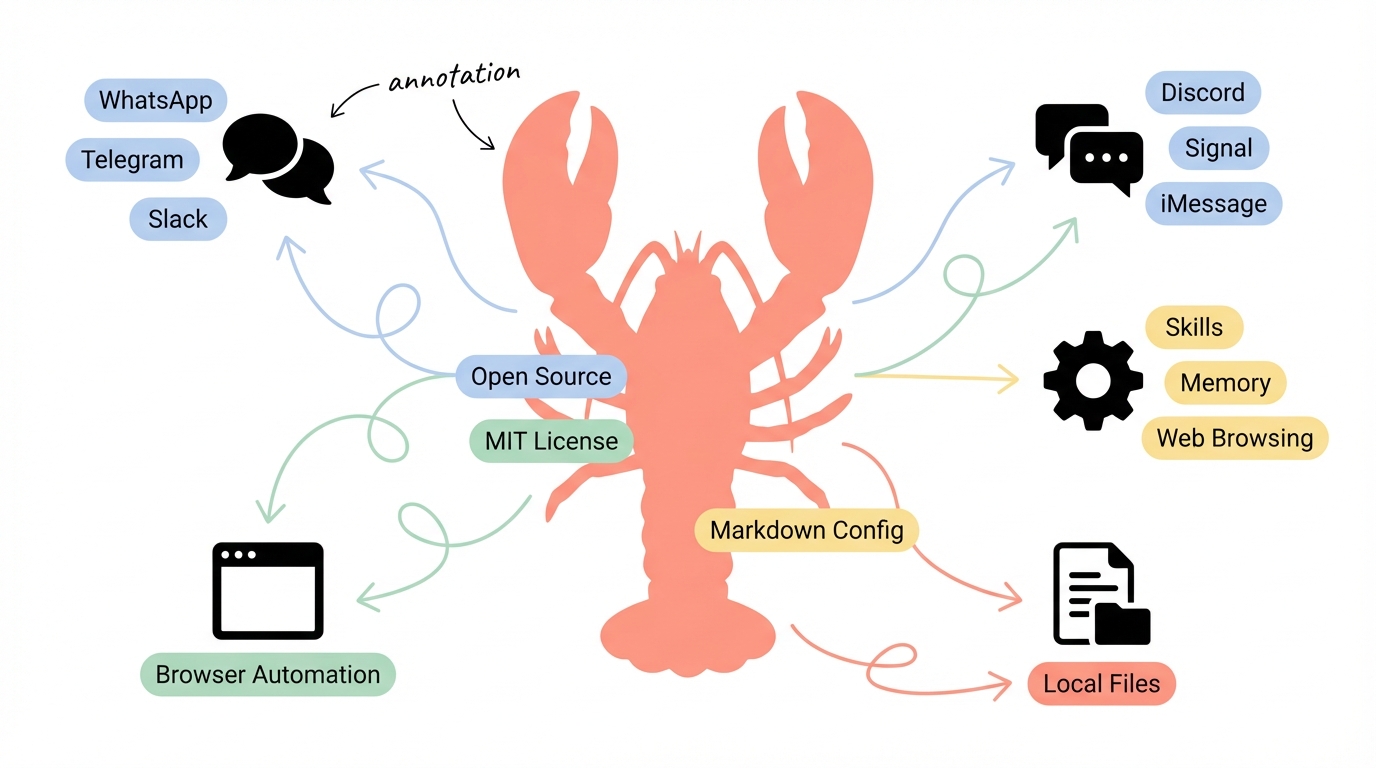

175,000 GitHub stars in under two weeks. An MIT-licensed codebase that connects to WhatsApp, Telegram, Slack, Discord, Signal, and iMessage. A configuration-first agent framework where you define agents in markdown files, not Python classes. OpenClaw didn't just launch — it detonated.

Created by Peter Steinberger (founder of PSPDFKit), OpenClaw represents something bigger than another open-source project going viral. It's the clearest signal yet that personal AI agents — software that doesn't just answer questions but actually does things on your behalf — have crossed from experimental curiosity into daily-driver territory. Gartner predicts 40% of enterprise applications will embed AI agents by the end of 2026, up from less than 5% in 2025. OpenClaw is what happens when that trend meets open source.

But the explosion of interest comes with real questions about security, autonomy, and what it means to hand shell access to an LLM. Let's dig in.

What OpenClaw actually is (and isn't)

OpenClaw is not a chatbot. It's not another wrapper around GPT. It's a self-hosted AI agent platform — a long-running Node.js process called the Gateway that handles channel connections, session state, agent loops, model calls, tool execution, and memory persistence.

The architecture is straightforward. You install OpenClaw globally (npm install -g openclaw@latest), run the onboarding wizard, and connect it to the messaging platforms you already use. The Gateway maintains a WebSocket control plane at ws://127.0.0.1:18789, and clients — CLI, macOS menu bar app, WebChat, iOS/Android apps — connect to it. The agent can then act on your behalf: searching the web, controlling a browser, managing files, sending messages, running shell commands, and automating workflows on a configurable schedule.

Three design decisions make OpenClaw distinct:

Local-first memory. Your data stays as Markdown files on your disk. No cloud database, no vendor lock-in. Context persists across conversations without sending your history to a third party.

Configuration over code. Agents are defined in markdown files. Skills — the units of capability — are portable and community-extensible. You don't write pipeline code; you configure files. There are over 100 preconfigured AgentSkills out of the box for everything from shell commands to web automation.

Model-agnostic. Bring your own API key for Claude, GPT, Gemini, or run local models entirely on your own infrastructure. The recommended model is Claude Opus 4.6 for its long-context strength and prompt-injection resistance, but nothing locks you in.

Getting started in 15 minutes

The setup is deliberately frictionless. Here's what it actually takes:

# Install (requires Node.js >= 22)

npm install -g openclaw@latest

# Run the onboarding wizard

openclaw onboard --install-daemon

The wizard walks you through three things: gateway configuration, workspace setup, and channel connection. For your first channel, Telegram is the easiest — search for @BotFather, create a new bot, paste the token into OpenClaw's prompt, and you're live.

From there, the interaction model is conversational. Ask your agent to research a topic, and it searches the web using Brave Search API, fetches pages, and synthesizes findings into a document saved to your local filesystem. Ask it to schedule a recurring task, and the heartbeat scheduler wakes it at configurable intervals to execute without prompting.

The typical cost of running OpenClaw with cloud models is $3-10 per month in API usage. The software itself is free.

One practical tip: start with a single channel and a single use case — like daily briefings or research summaries — before layering on complexity. OpenClaw's power comes from composing capabilities, and understanding how the agent reasons about tasks helps you write better instructions.

The skill system: where OpenClaw gets interesting

Skills are OpenClaw's extension mechanism, and they're where the community has really run with the project. A skill is a self-contained package that gives the agent a new capability — anything from "search Hacker News" to "manage my Kubernetes cluster."

Skills come in three tiers:

- Bundled skills ship with OpenClaw (web search, browser control, file operations)

- Managed skills are curated and installable from a central hub

- Workspace skills are custom skills you write for your own workflows

The portable format means skills can be shared, and the community has responded. Projects like Antfarm layer multi-agent workflows on top — giving you a team of specialized agents (planner, developer, verifier, tester, reviewer) that collaborate on tasks.

This is where OpenClaw mirrors a broader industry trend. Gartner reported a 1,445% surge in multi-agent system inquiries from Q1 2024 to Q2 2025. The shift isn't toward single all-purpose agents but toward an "orchestrated workforce" — a primary orchestrator directing smaller, expert agents. OpenClaw's skill system is essentially that pattern made accessible.

The security question nobody wants to talk about

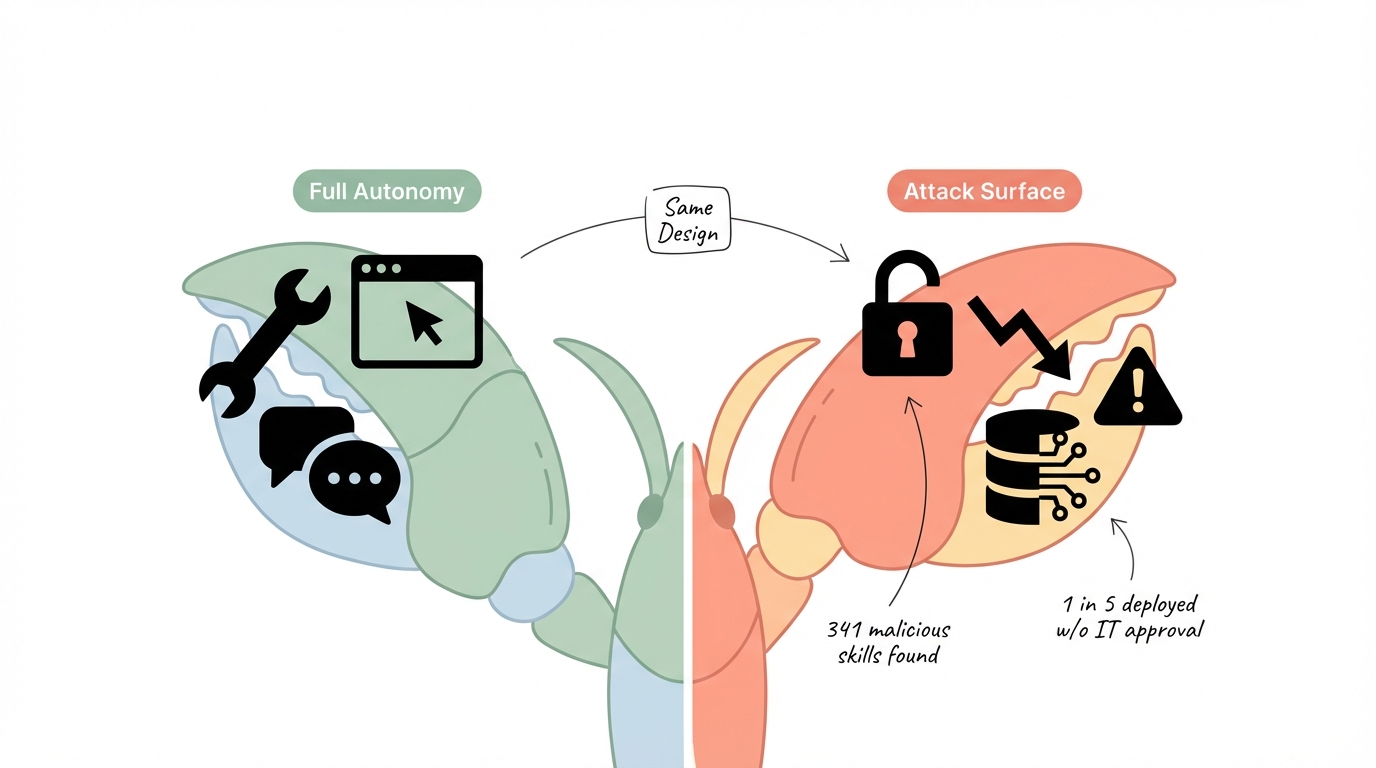

Here's the tension: OpenClaw's greatest strength — unrestricted configurability — is also its most significant risk.

Trend Micro published a detailed security analysis in February 2026 that should be required reading for anyone deploying OpenClaw. Their researchers found 341 malicious skills in OpenClaw's skill hub. They documented prompt injection vectors where "an attacker could embed a malicious prompt within a webpage or hide it inside a document" to manipulate agent behavior. They found that persistent memory combined with agent-to-agent communication creates data exfiltration pathways where "a single manipulation could propagate across external systems."

The numbers are sobering. One in five organizations deployed OpenClaw without IT approval. Misconfigurations have already exposed millions of records — API tokens, email addresses, private messages, and credentials.

OpenClaw enforces minimal guardrails compared to managed alternatives. There's no mandatory human-in-the-loop for sensitive actions, no approval mechanism required for financial transactions, and unrestricted access to external services and file systems. Users can configure safety rails, but the defaults lean toward autonomy.

This isn't a flaw unique to OpenClaw — it's the fundamental tension of agentic AI. Any software that can "do things" on your behalf can also do the wrong things. But OpenClaw's open, permissive design amplifies the surface area. If you deploy it, treat security configuration as step one, not an afterthought:

- Scope permissions to what's actually needed

- Run as a non-privileged user

- Vet every skill before installation

- Keep sensitive operations behind human approval

- Use a dedicated directory for agent file operations

Where agents are actually heading

OpenClaw is one data point in a much larger shift. The AI agent market was valued at $7.84 billion in 2025 and is projected to reach $52.62 billion by 2030 — a 46.3% compound annual growth rate. That's not hype-driven speculation; it tracks with what's actually shipping.

Google's AI Business Trends Report describes a near-term future where "a three-person team can launch a global campaign in days" with AI agents as digital coworkers. Microsoft's 2026 outlook highlights agents joining scientific discovery — not just summarizing papers but generating hypotheses and controlling experiments. The Goldman Sachs view adds personal agents and "mega alliances" between AI providers to the forecast.

Three trends stand out for what's next:

From chatbots to coworkers. The agent paradigm shift isn't about better chat interfaces. It's about software that maintains state, executes multi-step workflows, and improves over time. OpenClaw's heartbeat scheduler — waking up periodically to check for tasks — is closer to a cron job with judgment than a conversation.

Smaller, specialized models at the edge. The industry is validating that domain-optimized models running on edge devices and embedded systems can match larger general-purpose models for specific tasks. This means agents won't always need cloud API calls, reducing latency and cost while improving privacy.

Governance becomes non-negotiable. As agents gain autonomy, the governance conversation shifts from "should we use AI" to "how do we prevent AI agents from acting outside their mandate." The human-in-the-loop pattern — where critical decisions route to a person — is emerging as the standard, not the exception.

What OpenClaw tells us about open source AI

OpenClaw's velocity reveals something about where developer energy is flowing. The project went from zero to 175,000 stars faster than almost anything in GitHub history, spawning an ecosystem of alternatives (Nanobot achieved similar functionality in 4,000 lines of Python), community extensions, and enterprise scrutiny — all in weeks.

This pattern — explosive growth, rapid ecosystem formation, immediate security concerns — will repeat. Every new category of AI tooling will see an open-source project break out, attract a massive community, and force conversations about safety that the original creators didn't anticipate. OpenClaw's Trend Micro wake-up call won't be the last.

The takeaway isn't to avoid OpenClaw or agents in general. It's that the agent era rewards people who engage with the tooling early, understand both the capabilities and the risks, and build habits around safe deployment. OpenClaw, with all its rough edges, is the most accessible on-ramp to that future available today.

Install it on a weekend. Connect it to Telegram. Give it a simple recurring task. Watch how it reasons, where it stumbles, what it does well. That hands-on intuition for how agents work will be worth more than any number of prediction reports.

Want more insights?

Subscribe to get the latest articles delivered straight to your inbox.