AI adoption doesn't stall on the technology

When enterprise AI projects fail, the blockers are almost never the model. They're the platform, the operating model, the governance, and the economic assumptions the project was built on. Here is what forward deployed engineers actually see.

The technology isn't the problem.

When organisations struggle to get value from AI — and most of them do, despite significant investment — the blockers are almost never about whether the model can do the task.

They're about everything that surrounds the model: the platform it sits on, the processes around it, the governance required to approve it, and the operating model changes it demands.

This week's reading, viewed through that lens, offers a clear picture of where enterprise AI adoption actually fails — and what it takes to fix it.

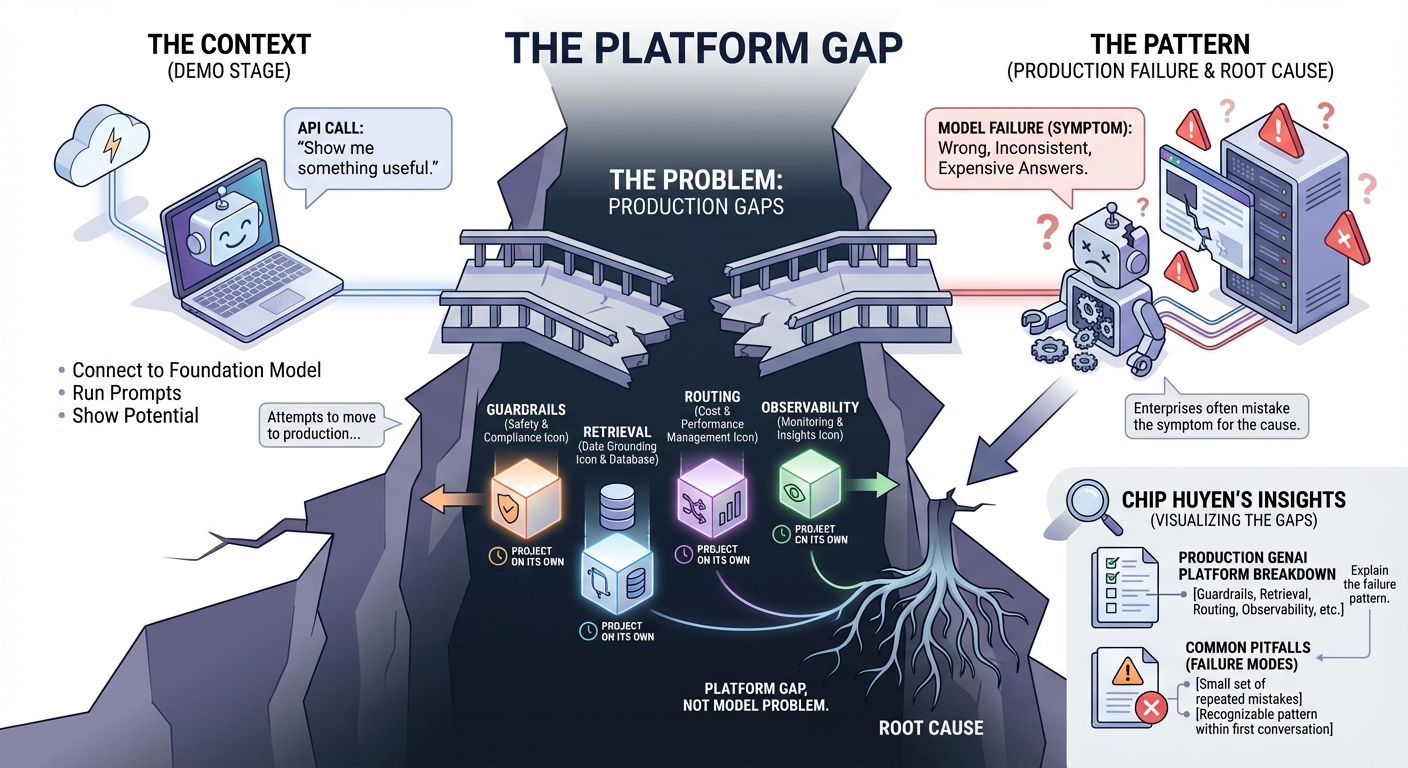

1. The platform gap

The context: Most AI adoption projects start with an API call. A team connects to a foundation model, runs some prompts, and shows it can do something useful. Then they try to take it to production.

The problem: That's when the gaps appear. What production actually requires — guardrails, retrieval to ground the model in real data, routing to manage costs, observability to understand what's happening — isn't in the demo. Each of those layers is a project on its own.

The pattern: When an enterprise AI deployment fails in production, it usually looks like a model failure. Wrong answers. Inconsistent answers. Expensive answers. But the root cause is almost always a platform gap, not a model problem.

Chip Huyen's breakdown of what a production GenAI platform requires makes these gaps visible. Her piece on common pitfalls names the failure modes, which is useful precisely because the same small set of mistakes shows up again and again. A forward deployed engineer (FDE) looking at a struggling AI deployment usually recognises the pattern within the first conversation.
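To make the gap between demo and production concrete, here is a minimal sketch of the four layers named above wrapped around a single model call. It is an illustration only, not taken from Chip Huyen's posts: `answer`, `Trace`, the keyword guardrail, and the three callables (`retrieve`, `pick_model`, `call_model`) are all hypothetical stand-ins for whatever a real platform would use.

```python
import time
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Trace:
    """Observability record for one request (hypothetical shape)."""
    events: list[str] = field(default_factory=list)
    latency_s: float = 0.0


def answer(
    question: str,
    retrieve: Callable[[str], list[str]],    # assumed retrieval function
    pick_model: Callable[[str], str],        # assumed routing function
    call_model: Callable[[str, str], str],   # assumed model client: (model, prompt) -> reply
    blocked_terms: tuple[str, ...] = ("password",),
) -> tuple[str, Trace]:
    trace = Trace()
    start = time.perf_counter()

    # Guardrail: a crude keyword check that refuses before any tokens are spent.
    if any(term in question.lower() for term in blocked_terms):
        trace.events.append("guardrail:blocked")
        trace.latency_s = time.perf_counter() - start
        return "Request refused by policy.", trace

    # Retrieval: ground the prompt in real data.
    docs = retrieve(question)
    trace.events.append(f"retrieval:{len(docs)} docs")

    # Routing: choose a model per request instead of hard-coding one.
    model = pick_model(question)
    trace.events.append(f"routing:{model}")

    prompt = "\n".join(docs) + "\n\nQuestion: " + question
    reply = call_model(model, prompt)
    trace.events.append("model:ok")

    # Observability: every request returns a record of what happened.
    trace.latency_s = time.perf_counter() - start
    return reply, trace
```

The demo is the single `call_model` line. Everything else in the function is what production adds, and each of those few lines stands in for a project of its own.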

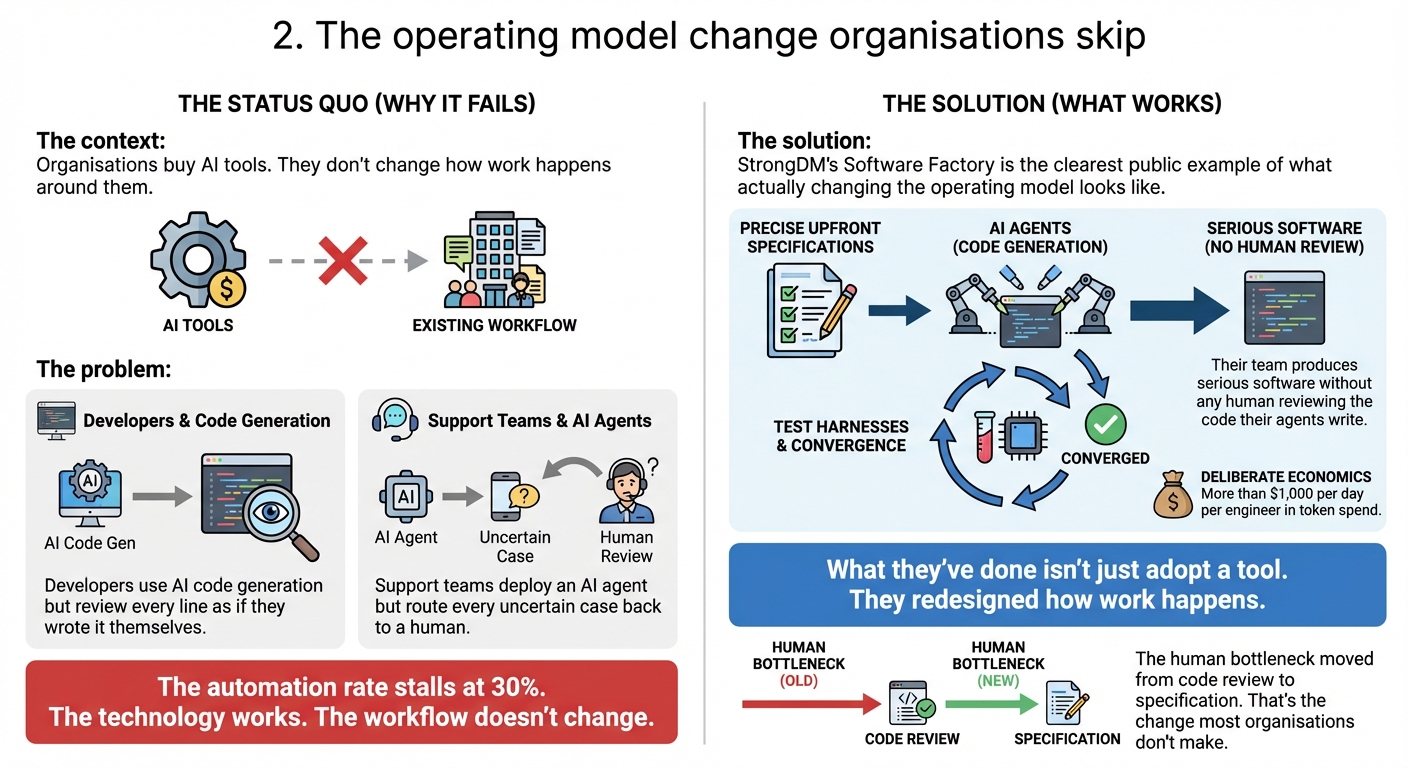

2. The operating model change organisations skip

The context: Organisations buy AI tools. They don't change how work happens around them.

The problem: This is why the time savings don't materialise. Developers use AI code generation but review every line as if they wrote it themselves. Support teams deploy an AI agent but route every uncertain case back to a human. The automation rate stalls at 30%. The technology works. The workflow doesn't change.

The solution: StrongDM's Software Factory is the clearest public example of what actually changing the operating model looks like.

Their team produces serious software without any human reviewing the code their agents write. Precise upfront specifications substitute for after-the-fact review. Agents run against test harnesses until they converge. The economics are deliberate — more than $1,000 per day per engineer in token spend.

What they've done isn't just adopt a tool. They redesigned how work happens. The human bottleneck moved from code review to specification. That's the change most organisations don't make.
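The "run against a test harness until it converges" loop can be sketched in a few lines. This is a guess at the general shape, not StrongDM's implementation: `run_until_green`, `generate`, and `run_tests` are hypothetical names, and the round limit is an assumption added so the loop cannot run forever.

```python
from typing import Callable


def run_until_green(
    spec: str,
    generate: Callable[[str, str], str],   # assumed agent call: (spec, feedback) -> code
    run_tests: Callable[[str], list[str]], # assumed harness: code -> list of failure messages
    max_rounds: int = 5,
) -> tuple[str, int]:
    """Regenerate code until the harness reports no failures."""
    feedback = ""
    for round_no in range(1, max_rounds + 1):
        code = generate(spec, feedback)
        failures = run_tests(code)
        if not failures:
            # Converged: the harness, not a human reviewer, accepted the code.
            return code, round_no
        # Feed the failures back to the agent for the next attempt.
        feedback = "\n".join(failures)
    raise RuntimeError(f"no convergence after {max_rounds} rounds")
```

Notice where the human effort sits in this shape: in writing `spec` and the tests behind `run_tests`, not in reading the code that comes out.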

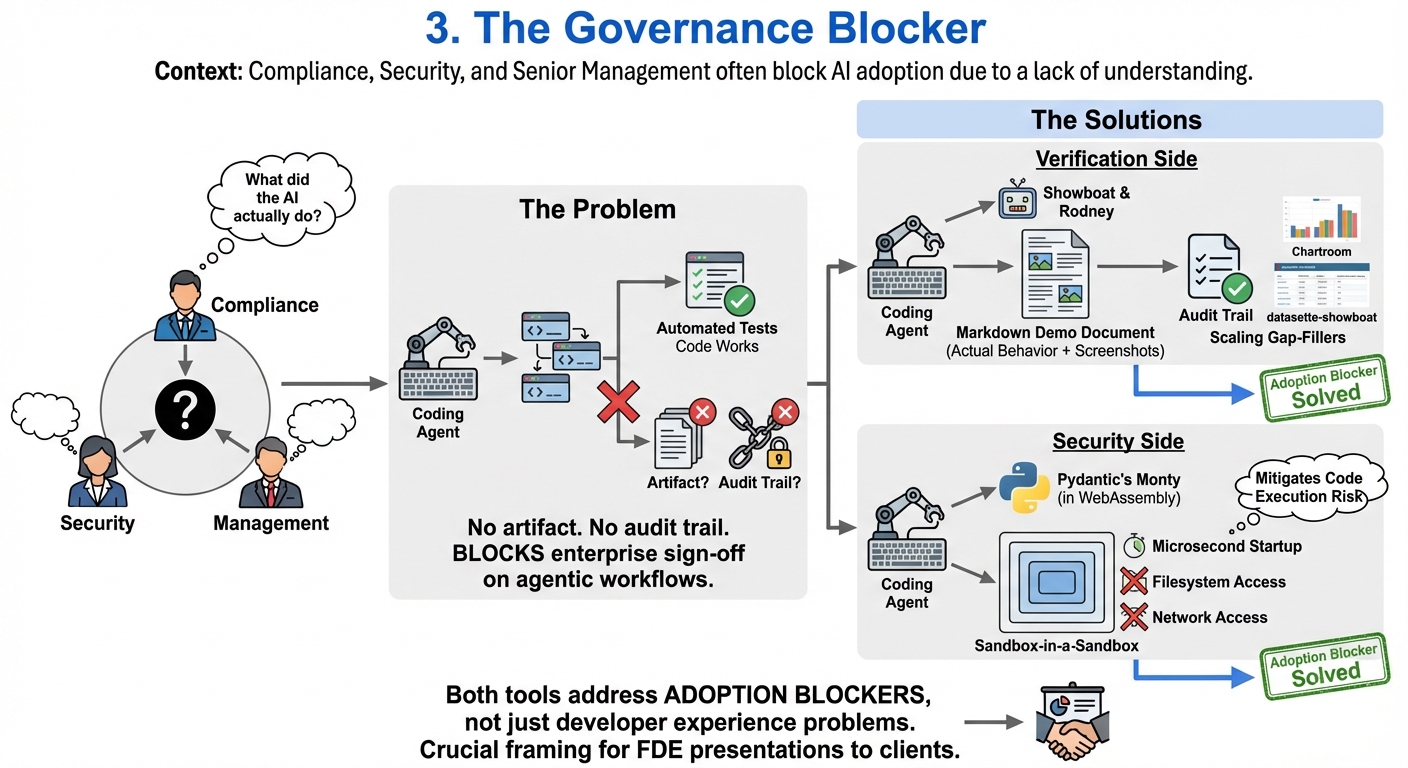

3. The governance blocker

The context: Compliance teams, security teams, and senior management often block AI adoption — not because they're opposed to AI, but because they can't answer a basic question: what did the AI actually do?

The problem: When a coding agent produces software, automated tests tell you whether the code works. They don't tell a compliance officer or a CTO what the agent built. There's no artifact. No audit trail. That's why many enterprises can't sign off on agentic workflows, regardless of how well the technology performs.

The solution, on the verification side: Simon Willison's Showboat lets coding agents produce Markdown demo documents as they work, showing actual behaviour, with screenshots captured via its companion tool Rodney. The result is the kind of audit trail that governance requires. The Chartroom and datasette-showboat extensions fill gaps teams hit as they adopt the pattern at scale.
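The underlying idea, an agent leaving a readable record of what it actually ran, can be illustrated without any special tooling. The sketch below is not Showboat and does not use its API. `record_step` is a hypothetical helper that runs a command and appends the command, its output, and its exit code to a Markdown file.

```python
import subprocess
import sys
from pathlib import Path


def record_step(doc: Path, note: str, argv: list[str]) -> int:
    """Run a command and append what happened to a Markdown audit document."""
    result = subprocess.run(argv, capture_output=True, text=True)
    entry = (
        f"{note}\n\n"
        # Indented lines render as a code block in Markdown.
        f"    $ {' '.join(argv)}\n"
        + "".join(f"    {line}\n" for line in result.stdout.splitlines())
        + f"\nExit code: {result.returncode}\n\n"
    )
    # Append rather than overwrite, so the document accumulates a trail.
    with doc.open("a", encoding="utf-8") as fh:
        fh.write(entry)
    return result.returncode


if __name__ == "__main__":
    record_step(Path("demo.md"), "Check the interpreter runs.",
                [sys.executable, "-c", "print('ok')"])
```

The point for a compliance reviewer is that the document records captured output rather than the agent's description of it.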

The solution — on the security side: The other reason security teams block AI coding agents is code execution risk. Agents running code they wrote, with filesystem and network access, is a real attack surface. Pydantic's Monty, which Willison got running in WebAssembly, creates a sandbox-in-a-sandbox — microsecond startup times, no filesystem or network access. Still experimental, but pointing at the right answer.

Both of these tools are solving adoption blockers, not developer experience problems. That framing matters when an FDE presents them to a client.

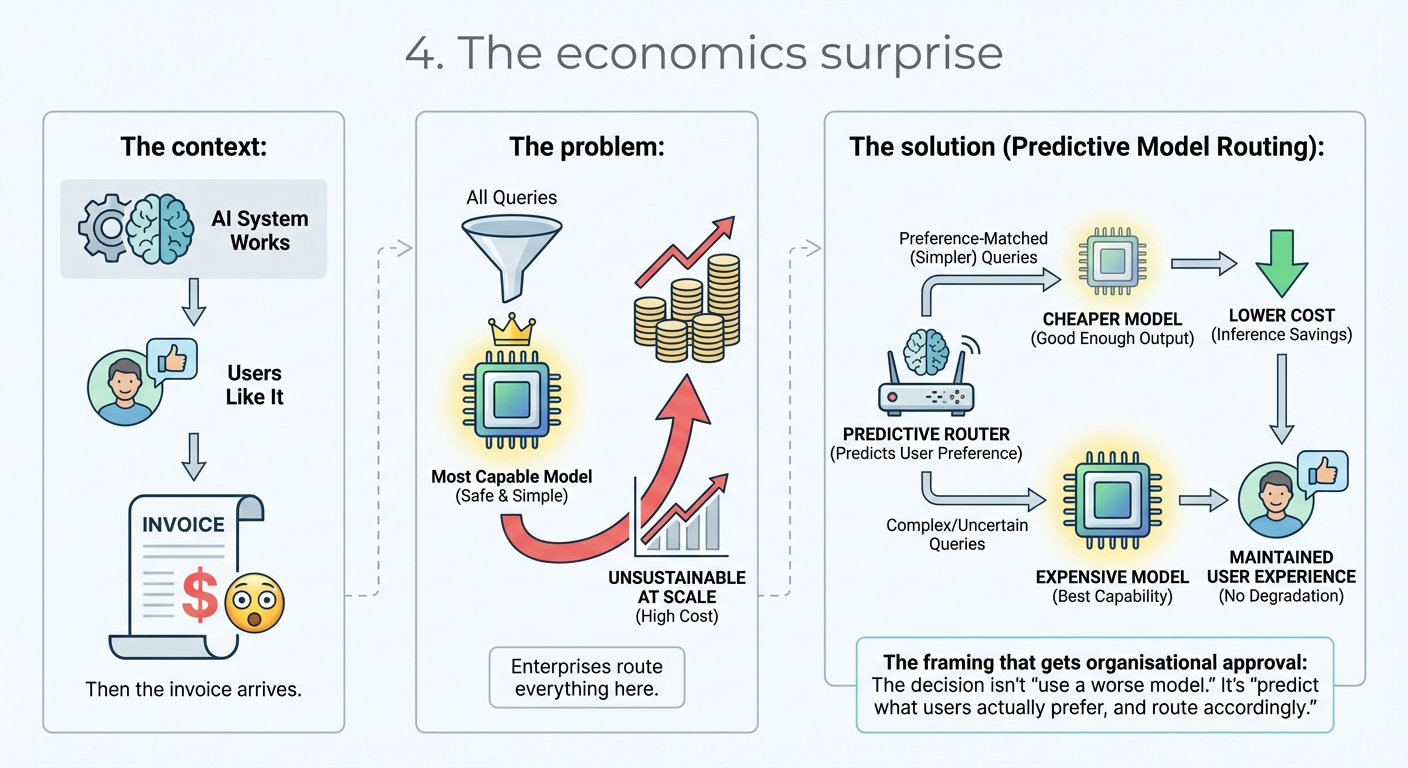

4. The economics surprise

The context: The AI system works. Users like it. Then the invoice arrives.

The problem: Enterprises almost always start with one model — usually the most capable one — and route everything there. Safe. Simple. Economically unsustainable at scale.

The solution: Chip Huyen's work on predictive model routing makes the case clearly. For a large fraction of query types, a cheaper model produces output that users prefer just as much as the expensive one's. Routing by predicted user preference, rather than always sending traffic to "the best model", can cut inference costs without degrading the experience.

The framing that gets organisational approval: the decision isn't "use a worse model." It's "predict what users actually prefer, and route accordingly."
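A rough sketch of what routing by predicted preference looks like in code, under stated assumptions: `predict_cheap_ok` is a hypothetical predictor returning the probability that users would like the cheaper model's answer at least as much, and the threshold and model names are placeholders. None of this is taken from Chip Huyen's implementation.

```python
from typing import Callable


def route(
    query: str,
    predict_cheap_ok: Callable[[str], float],  # assumed preference predictor, returns 0.0 to 1.0
    threshold: float = 0.8,                    # assumed cut-off, tuned per product
    cheap: str = "small-model",                # placeholder model names
    strong: str = "large-model",
) -> str:
    """Send the query to the cheap model only when users are predicted not to mind."""
    return cheap if predict_cheap_ok(query) >= threshold else strong


def blended_cost(share_cheap: float, cost_cheap: float, cost_strong: float) -> float:
    """Average cost per query when a share of traffic goes to the cheap model."""
    return share_cheap * cost_cheap + (1 - share_cheap) * cost_strong
```

With purely illustrative numbers, if 60% of queries can go to a model that costs a tenth as much, the blended cost per query falls to 0.6 × 0.1 + 0.4 × 1.0 = 0.46 of the original. The real figures depend on the traffic mix and on how good the predictor is.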

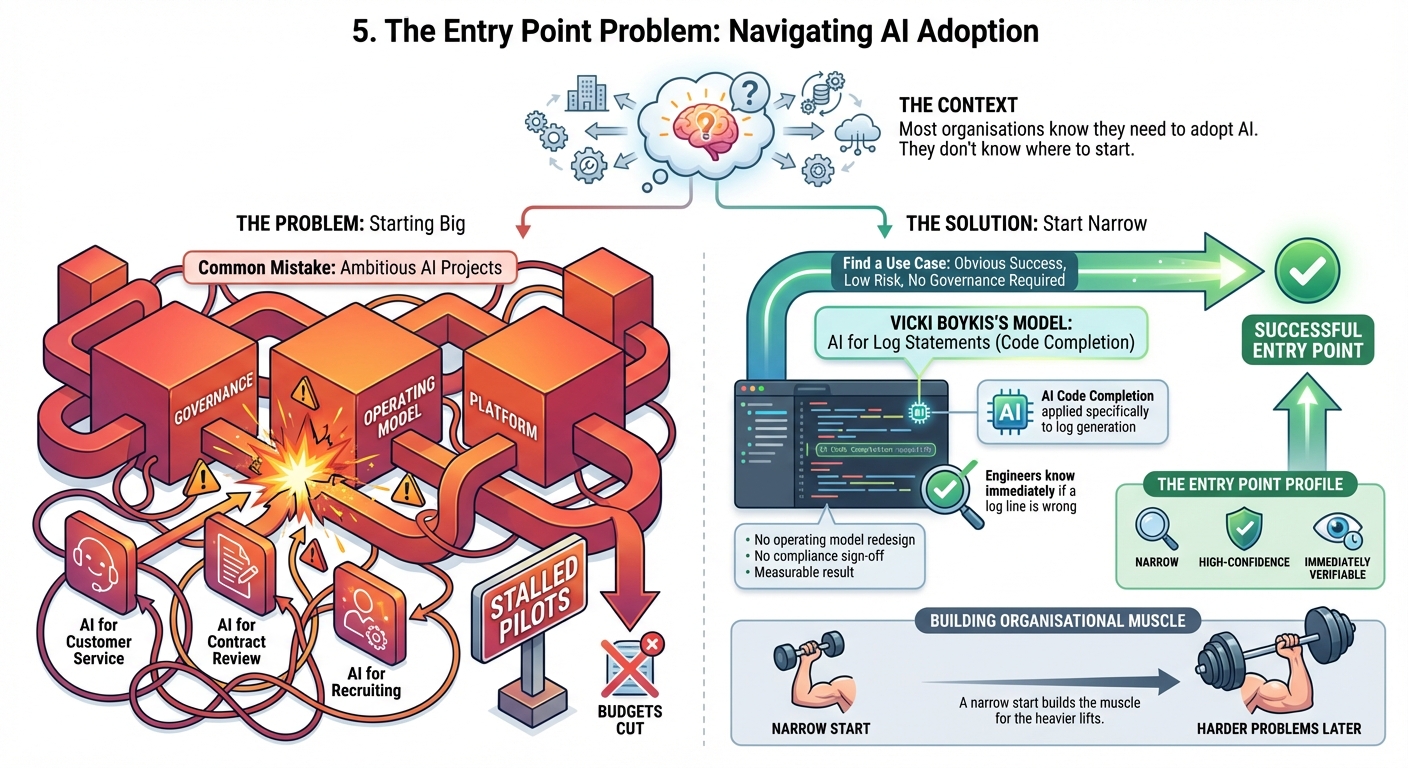

5. The entry point problem

The context: Most organisations know they need to adopt AI. They don't know where to start.

The problem: The common mistake is to start big — AI for customer service, AI for contract review, AI for recruiting. These hit the governance, operating model, and platform challenges all at once. Pilots stall. Budgets get cut.

The solution: Start narrow. Find a use case where success is obvious, risk is low, and no governance approval is required.

Vicki Boykis's case for using AI to write log statements is the right model. AI code completion applied specifically to log generation — not general code authorship — saves real time on a tedious task. Engineers know immediately if a log line is wrong. No operating model redesign. No compliance sign-off. Measurable result. That's the entry point profile: narrow, high-confidence, immediately verifiable.

A narrow start builds the organisational muscle for the harder problems later.

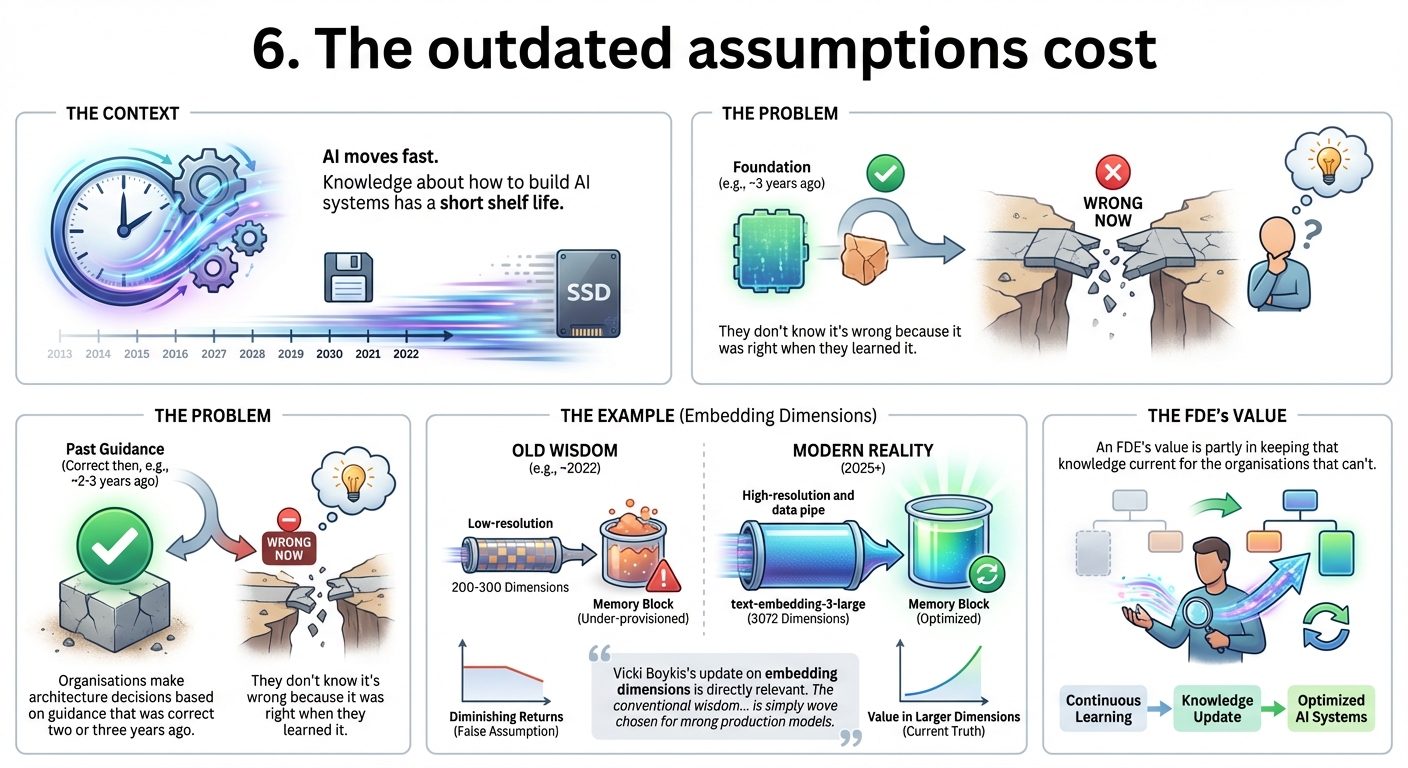

6. The outdated assumptions cost

The context: AI moves fast. Knowledge about how to build AI systems has a short shelf life.

The problem: Organisations make architecture decisions based on guidance that was correct two or three years ago. They don't know it's wrong because it was right when they learned it.

The example: Vicki Boykis's update on embedding dimensions is directly relevant. The conventional wisdom a few years ago — 200-300 dimensions, diminishing returns beyond that — is simply wrong for modern production models. text-embedding-3-large ships at 3072 dimensions. Teams that built retrieval architectures around the old numbers are under-provisioning memory and may have chosen the wrong models entirely.
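The memory impact is simple arithmetic. The sketch below assumes float32 values (4 bytes each) and a corpus of 100 million vectors. Both are illustrative assumptions, and the figure covers raw vector storage only, not index overhead.

```python
def index_bytes(num_vectors: int, dims: int, bytes_per_value: int = 4) -> int:
    """Raw storage for the vectors alone, assuming float32 by default."""
    return num_vectors * dims * bytes_per_value


NUM_VECTORS = 100_000_000  # assumed corpus size, for illustration

old = index_bytes(NUM_VECTORS, 300)    # the old rule-of-thumb dimension
new = index_bytes(NUM_VECTORS, 3072)   # text-embedding-3-large

print(f"{old / 1e9:.1f} GB vs {new / 1e9:.1f} GB ({new / old:.2f}x)")
# prints: 120.0 GB vs 1228.8 GB (10.24x)
```

A capacity plan sized for 300 dimensions is off by roughly an order of magnitude before any index structures are counted.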

An FDE's value is partly in keeping that knowledge current for the organisations that can't.

The question that matters

Simon Willison building a custom macOS app overnight for a conference talk shows how far individual AI-assisted productivity has moved. And Vicki Boykis implementing map-reduce vector search from first principles on 3 billion vectors is a reminder that the infrastructure beneath enterprise AI is scaling in ways that need deliberate attention.

But neither of those is the hard part for most organisations.

The hard part is this: the question is never "can AI do this?" The question is always "what do our operating model, governance, and economic model need to look like for AI to do this reliably at scale?"

Those are organisational design questions. The technology is usually the easier part.

Sources this week: Chip Huyen Blog, Simon Willison's Weblog, Vicki Boykis Blog
