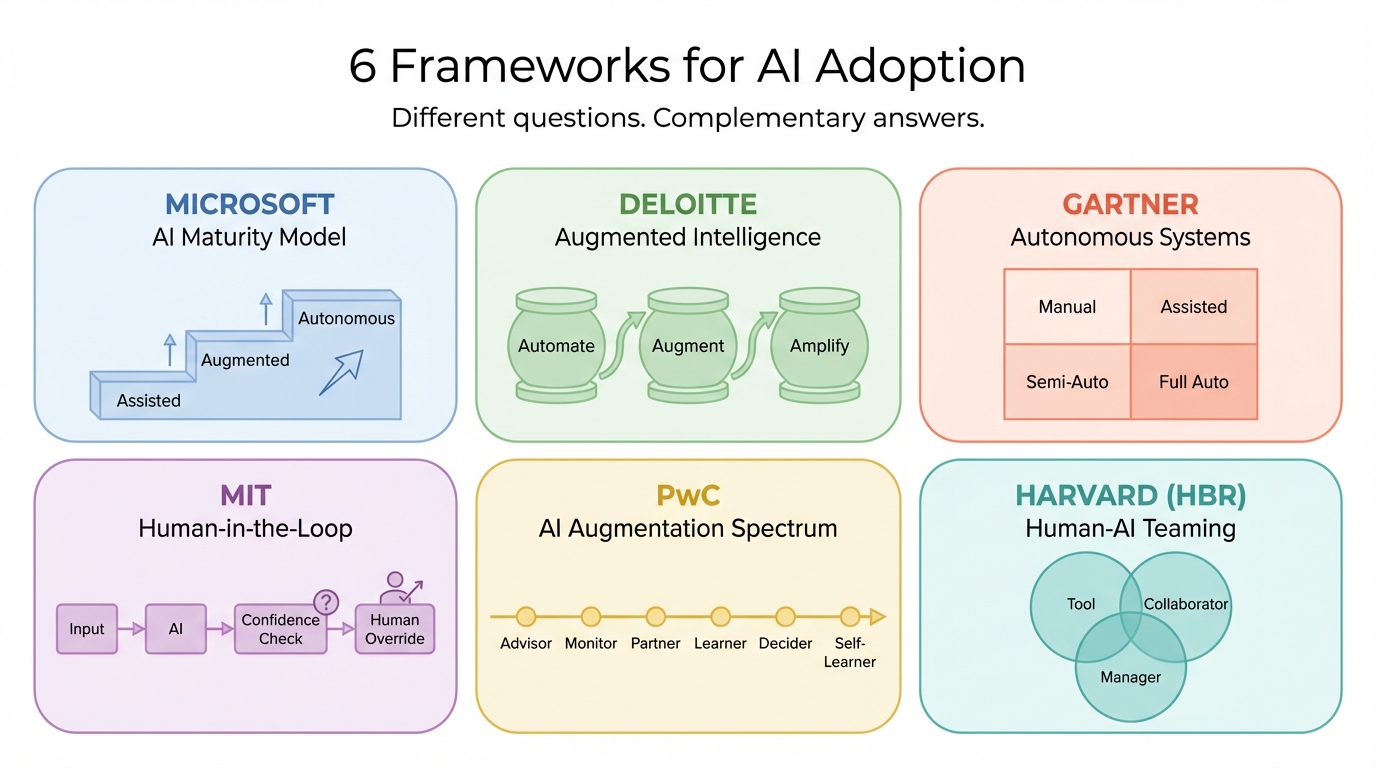

6 AI frameworks that actually tell you how to work with AI (not just talk about it)

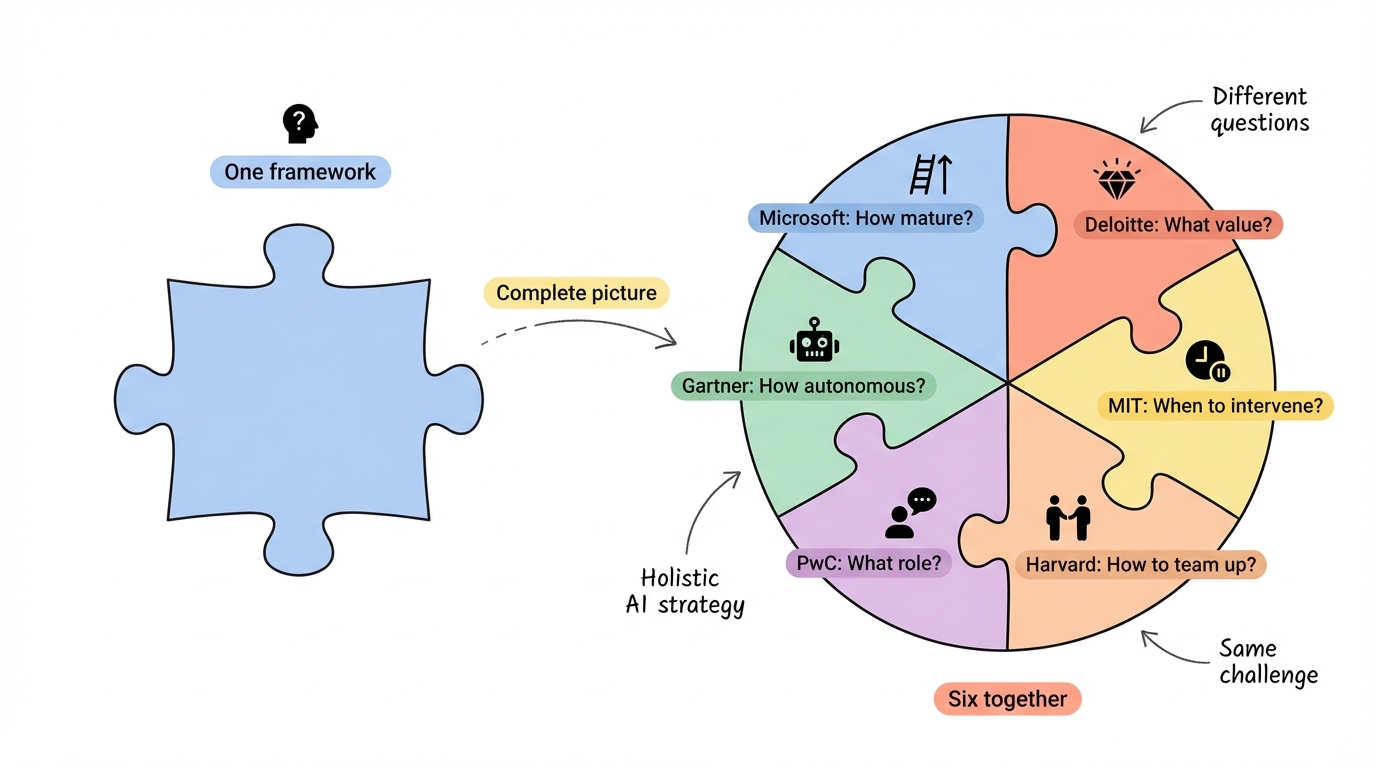

Six frameworks from Microsoft, Deloitte, Gartner, MIT, PwC, and Harvard Business Review all address how organizations should actually work with AI. We traced each one back to its original source.

Every organization is asking the same question right now: we know AI matters, but how do we actually work with it?

The advice out there isn't helping. It's either too vague ("just adopt AI!") or too technical ("fine-tune your transformer architecture"). Neither tells a business leader what to actually do on Monday morning.

Six major organizations (Microsoft, Deloitte, Gartner, MIT, PwC, and Harvard Business Review) have each published a framework that answers this question from a different angle. We dug into the original sources to understand what each framework says, where it comes from, and how the six fit together into a complete picture.

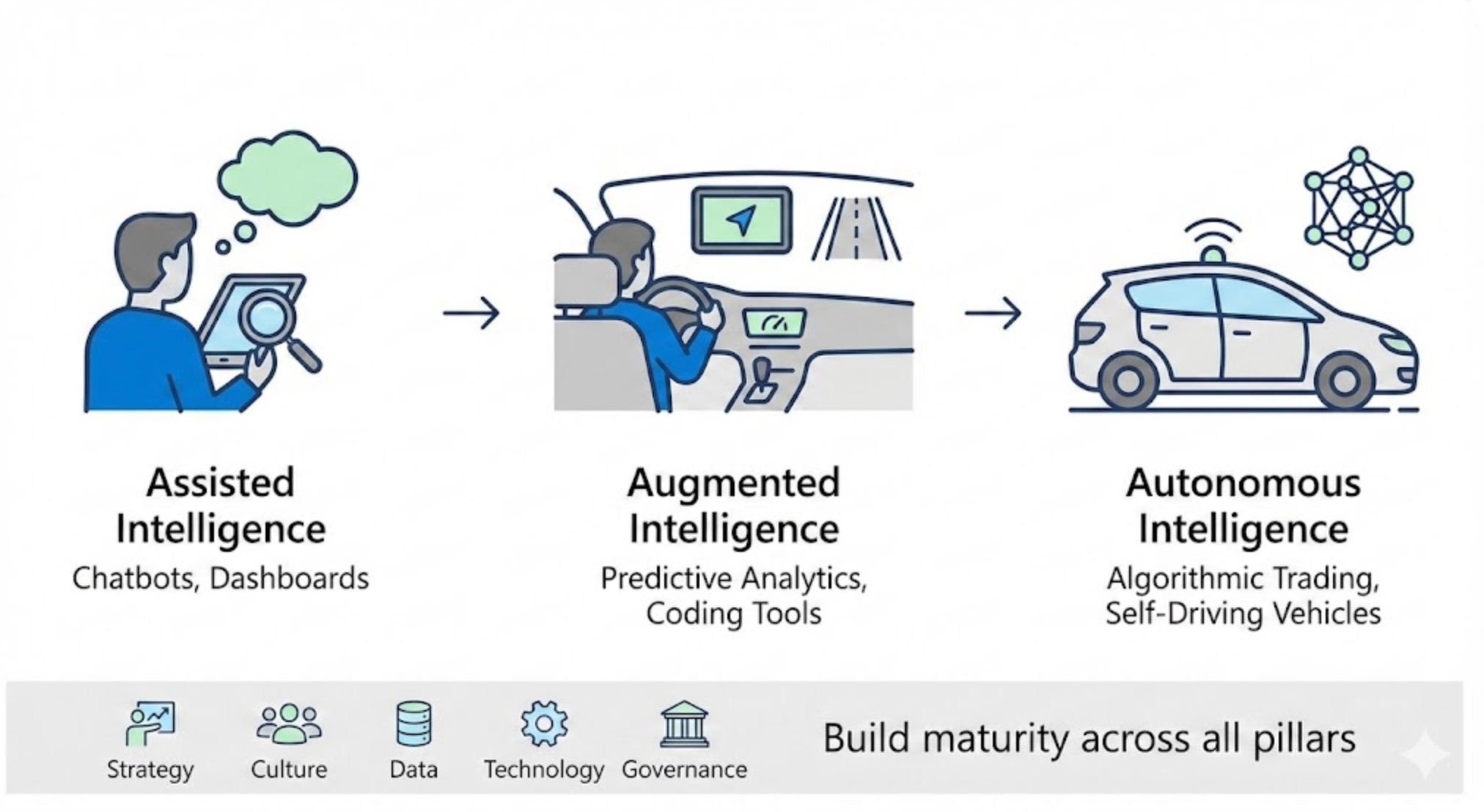

Microsoft's AI maturity model: where are you on the journey?

Microsoft frames AI adoption as a progression through three stages: Assisted Intelligence, Augmented Intelligence, and Autonomous Intelligence. Think of it like learning to drive. First, you get GPS directions (assisted). Then, lane-keeping and adaptive cruise control help you drive better (augmented). Eventually, the car drives itself (autonomous).

Assisted Intelligence is where most companies start. AI gives you data, charts, and recommendations, but a human makes every call. Think chatbots that answer FAQs or dashboards that flag anomalies. The AI is a research assistant, not a decision-maker.

Augmented Intelligence is the middle ground where AI starts actively shaping outcomes. It's not just showing you data; it's suggesting what to do with it. Predictive analytics, AI-assisted coding tools, smart document processing. You're still in the driver's seat, but AI is making you a better driver.

Autonomous Intelligence is the endgame: AI makes decisions and takes action independently. Algorithmic trading, autonomous supply chain management, self-driving vehicles. Most organizations aren't here yet, and for good reason. It requires deep trust in your AI systems and robust governance.

Here's what Microsoft's official Cloud Adoption Framework actually emphasizes: don't try to jump to autonomous. Build maturity across strategy, culture, data, technology, and governance simultaneously. The organizations that skip steps are the ones that end up with expensive AI projects that nobody trusts.

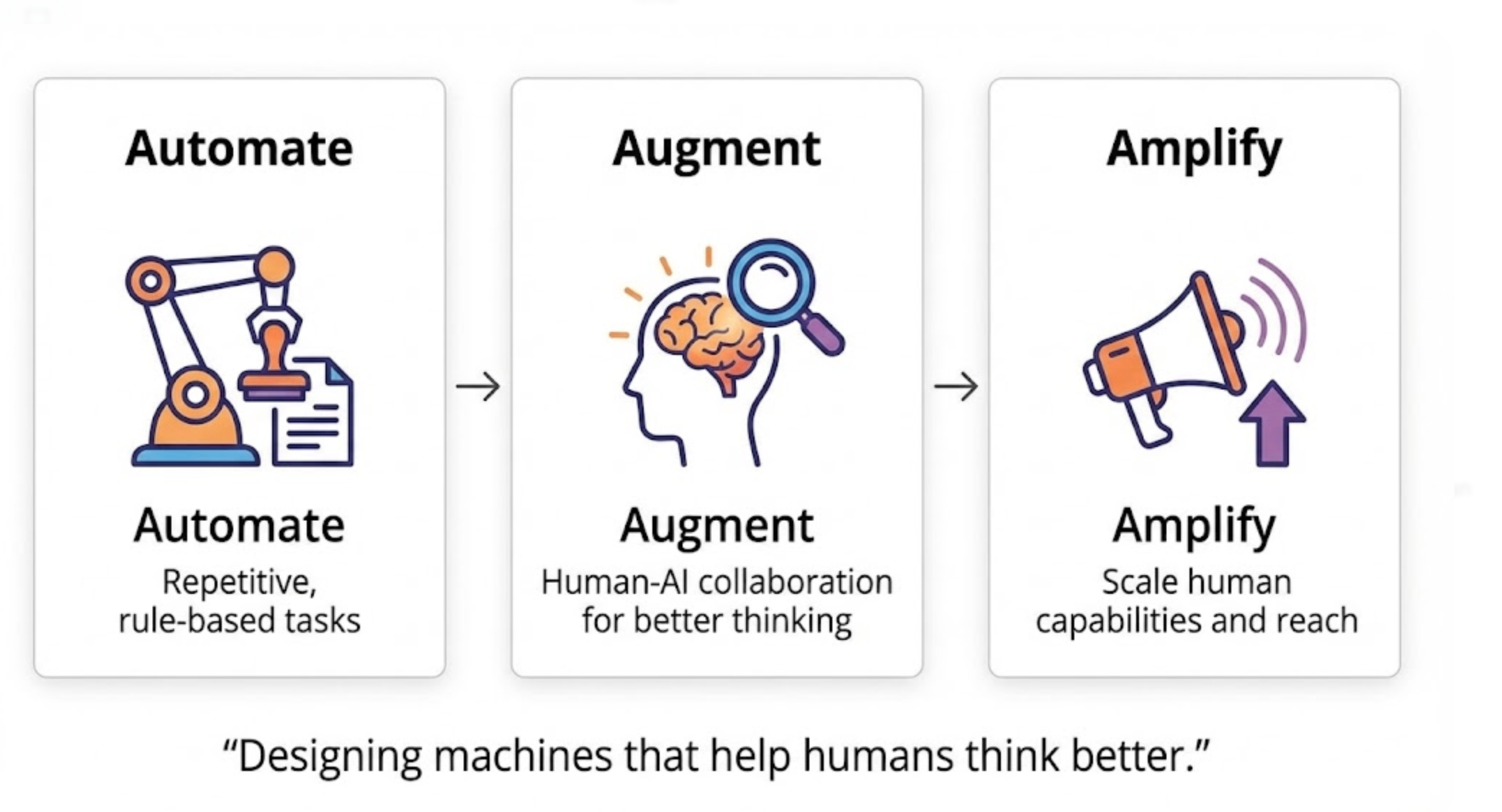

Deloitte's augmented intelligence framework: automate, augment, amplify

Deloitte takes a different angle. Instead of asking "how mature are we?", they ask: "what kind of value should AI create?"

Their framework breaks AI's contribution into three buckets:

Automate is the most straightforward. AI takes over repetitive, rule-based tasks. Invoice processing, data entry, standard customer queries. The work still needs to get done, but a human doesn't need to do it anymore. This frees people up for work that actually requires judgment.

Augment is where it gets interesting. AI doesn't replace the human; it makes the human better. A doctor using AI-powered diagnostic tools still makes the diagnosis, but with access to pattern recognition across millions of cases. A financial analyst using AI still writes the recommendation, but informed by real-time market analysis no human could process alone.

Amplify is about scale. AI helps humans do things they simply couldn't do before, not because they lacked the skill, but because they lacked the bandwidth. Personalized marketing at the individual level. Real-time fraud detection across millions of transactions. Product recommendations that update in milliseconds.

The insight from Deloitte's Chief Data Scientist Jim Guszcza is worth remembering: "The goal is not building machines that think like humans, but designing machines that help humans think better." That philosophy, originally articulated in Deloitte's Cognitive Collaboration research, underpins everything they recommend about AI strategy.

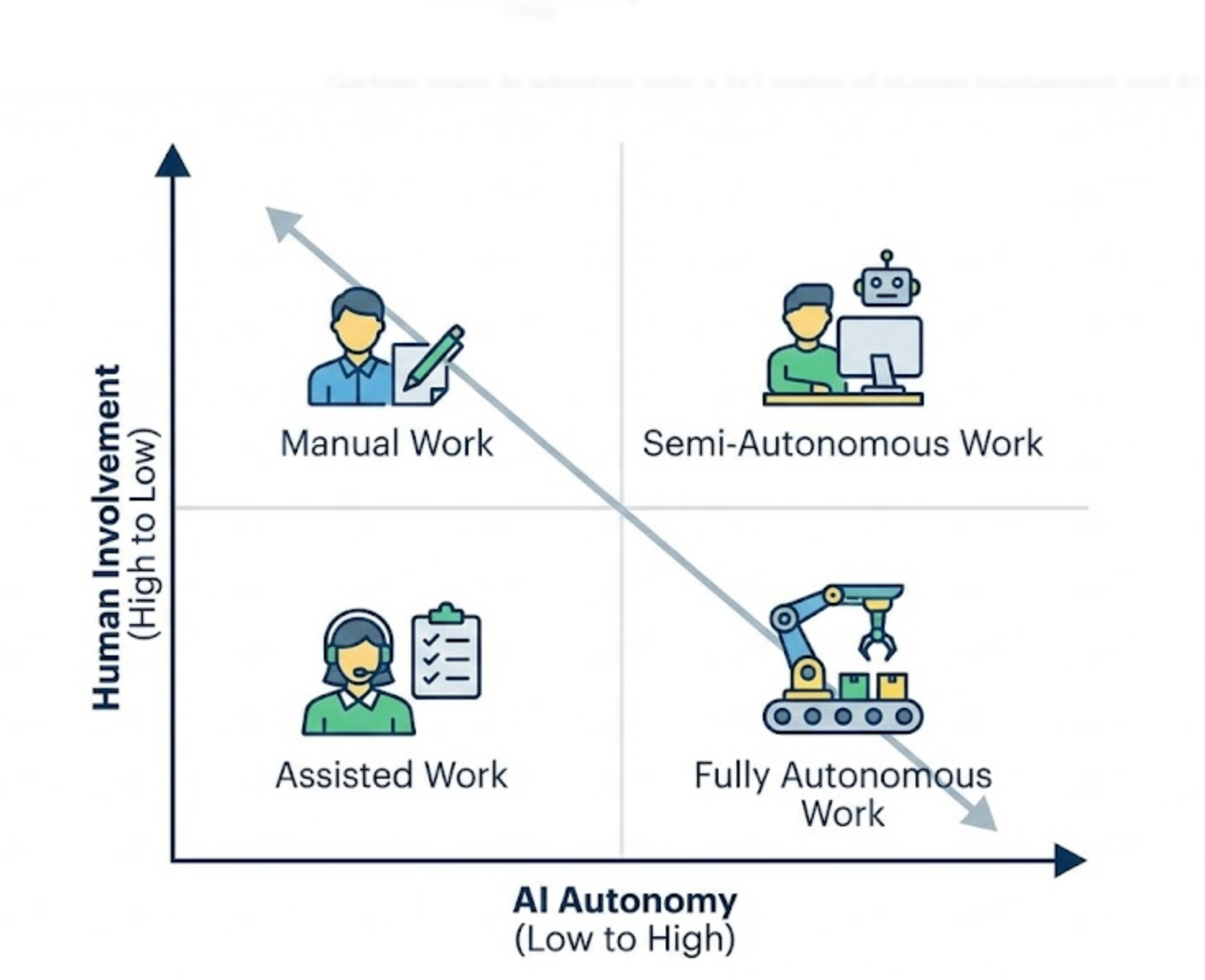

Gartner's autonomous systems framework: the human-AI control spectrum

Gartner maps AI adoption onto a 2x2 matrix with two axes: Human Involvement (high to low) and AI Autonomy (low to high). This creates four quadrants:

Manual Work sits in the corner where humans do everything and AI does nothing. Traditional processes, pen-and-paper workflows, purely human decision-making. This is your starting point.

Assisted Work is high human involvement with low AI autonomy. Humans do the work, but AI helps. Think of a warehouse worker using an AI-powered picking system that suggests the fastest route, or a customer service rep with AI-suggested responses. The human is still driving.

Semi-Autonomous Work flips the balance. AI handles most of the task, but humans intervene when needed. An AI system that processes loan applications but flags edge cases for human review. A content moderation system that auto-removes clear violations but escalates ambiguous posts. The AI is driving, but a human has their hand on the wheel.

Fully Autonomous Work is the top corner: AI performs tasks independently with no human input. Robotic process automation in back-office operations, autonomous quality inspection on manufacturing lines, algorithmic trading systems. The AI drives, and the human isn't even in the car.

What makes Gartner's framing useful is that it's not a ladder. You don't need to move every process to fully autonomous. Some work should stay manual. Some should stay assisted. The framework helps you make that choice deliberately rather than defaulting to "automate everything."

MIT's human-in-the-loop model: keeping humans where it matters

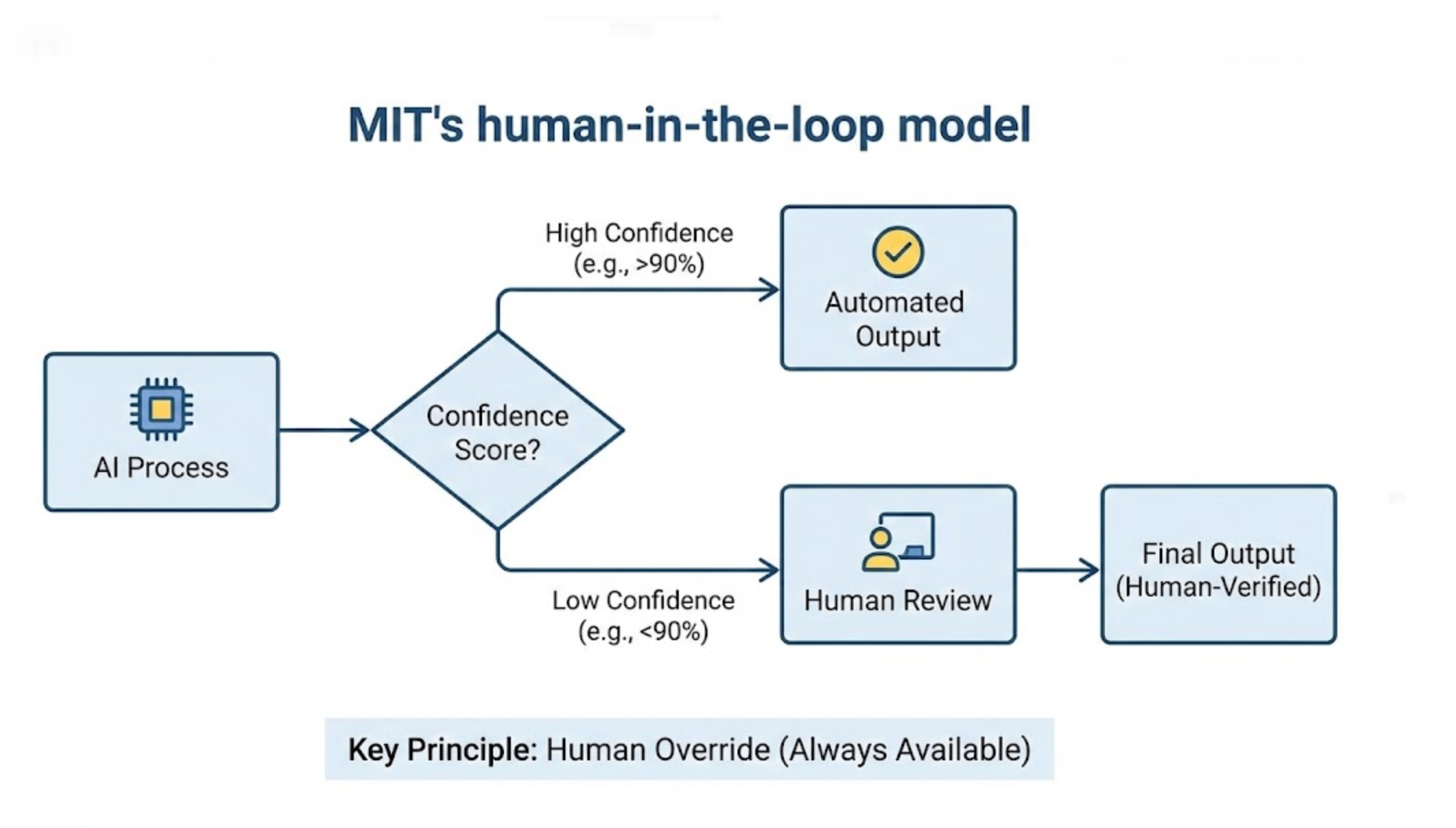

MIT's contribution is less about adoption stages and more about a fundamental design principle: how do you keep humans involved in AI systems, and when should they step in?

The Human-in-the-Loop (HITL) model works on a confidence-based system. When AI processes an input, it produces both an output and a confidence score. When confidence is high, the output goes straight through. When confidence drops below a threshold, the system routes to a human for review.

Here's a simple example. An AI reads medical images and flags potential tumors. For straightforward cases where the AI is 98% confident, the result goes directly to the patient's file. For ambiguous cases at 75% confidence, a radiologist reviews the scan. For anything flagged as unusual, a human makes the final call.

The key design choice is the human override: regardless of confidence level, a human can always step in and correct the AI's output. This is especially important for sensitive decisions in healthcare, finance, criminal justice, or any domain where errors have serious consequences.
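The routing logic described above can be sketched in a few lines of Python. This is a minimal illustration, not MIT's implementation: the names `Prediction`, `route`, and `final_label` are hypothetical, and the 0.90 threshold is an assumption, since the framework only says that a threshold exists.

```python
from dataclasses import dataclass
from typing import Optional

# Assumed threshold for illustration; tune it per domain and risk level.
REVIEW_THRESHOLD = 0.90


@dataclass
class Prediction:
    label: str                     # what the AI thinks the answer is
    confidence: float              # the AI's confidence, between 0 and 1
    flagged_unusual: bool = False  # set when the input looks out of the ordinary


def route(pred: Prediction, threshold: float = REVIEW_THRESHOLD) -> str:
    """Return 'auto' if the output can pass straight through, else 'human_review'."""
    if pred.flagged_unusual or pred.confidence < threshold:
        return "human_review"
    return "auto"


def final_label(pred: Prediction, human_label: Optional[str] = None) -> str:
    """Human override: if a reviewer supplies a label, it always wins."""
    return human_label if human_label is not None else pred.label
```

With these assumptions, the 98% case from the example routes to `auto`, the 75% case routes to `human_review`, and a reviewer's label replaces the AI's output regardless of the confidence score.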

What MIT's approach gets right is that it doesn't frame human involvement as a limitation. It's a feature. Humans bring contextual understanding, ethical judgment, and common sense that AI systems still lack. The framework's job is to put humans exactly where they add the most value: on the hard, ambiguous, high-stakes decisions where AI falls short.

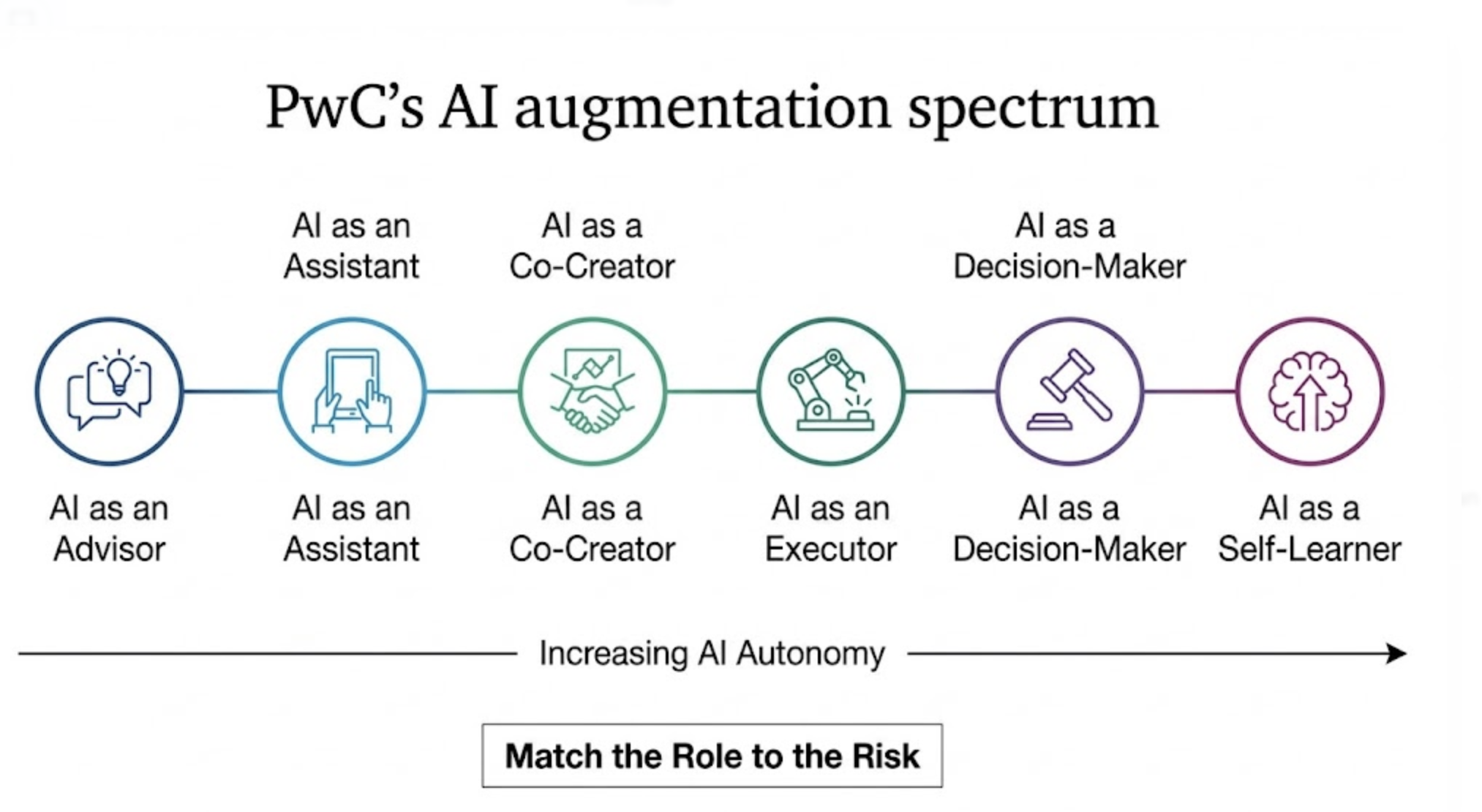

PwC's AI augmentation spectrum: six roles AI can play

PwC's framework is the most granular of the six. Rather than stages or quadrants, they define six specific roles that AI can play relative to humans, arranged along a spectrum of increasing autonomy:

AI as an Advisor provides insights and recommendations, but every decision stays with the human. Think market research tools, trend analysis dashboards, or risk scoring systems. The AI says "here's what I see." The human says "here's what we'll do."

AI as an Assistant actively helps humans complete tasks more efficiently. Email drafting, meeting scheduling, code completion. The AI handles the routine parts so you can focus on the parts that need your judgment.

AI as a Co-Creator works collaboratively with humans on complex tasks. Design tools that generate options for a designer to refine. Writing assistants that draft content a human shapes and polishes. The output is a genuine collaboration.

AI as an Executor performs tasks with minimal human input. Automated report generation, data pipeline management, routine customer interactions. You set the parameters; AI does the work.

AI as a Decision-Maker makes decisions independently within defined boundaries. Pricing optimization, ad placement, inventory management. The AI has authority to act, but within guardrails humans have set.

AI as a Self-Learner continuously improves its own performance through feedback loops and adaptation. Recommendation engines that get better with use, fraud detection systems that evolve with new patterns.

PwC's spectrum is useful because it gives you a vocabulary for different conversations. Your marketing team might need AI as a Co-Creator. Your finance team might need AI as an Advisor. Your operations team might need AI as an Executor. Same technology, different roles. PwC's broader AI practice emphasizes matching the role to the risk: the higher the stakes, the more human involvement you want.
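One way to make "match the role to the risk" concrete is to cap how much autonomy a use case gets based on its risk tier. The sketch below is a thought experiment rather than anything PwC publishes: the risk tiers, the caps in `MAX_ROLE_BY_RISK`, and the function `allowed_role` are all assumptions made for illustration.

```python
from enum import IntEnum


class AIRole(IntEnum):
    """The six roles from the spectrum, ordered by increasing autonomy."""
    ADVISOR = 1
    ASSISTANT = 2
    CO_CREATOR = 3
    EXECUTOR = 4
    DECISION_MAKER = 5
    SELF_LEARNER = 6


# Hypothetical policy: the most autonomous role permitted at each risk tier.
MAX_ROLE_BY_RISK = {
    "high": AIRole.ADVISOR,
    "medium": AIRole.CO_CREATOR,
    "low": AIRole.DECISION_MAKER,
}


def allowed_role(requested: AIRole, risk: str) -> AIRole:
    """Cap the requested role at the ceiling for the given risk tier."""
    return min(requested, MAX_ROLE_BY_RISK[risk])
```

Under this assumed policy, a team asking for an Executor on a high-risk process would be stepped down to Advisor, while a request for an Assistant on a medium-risk process passes unchanged.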

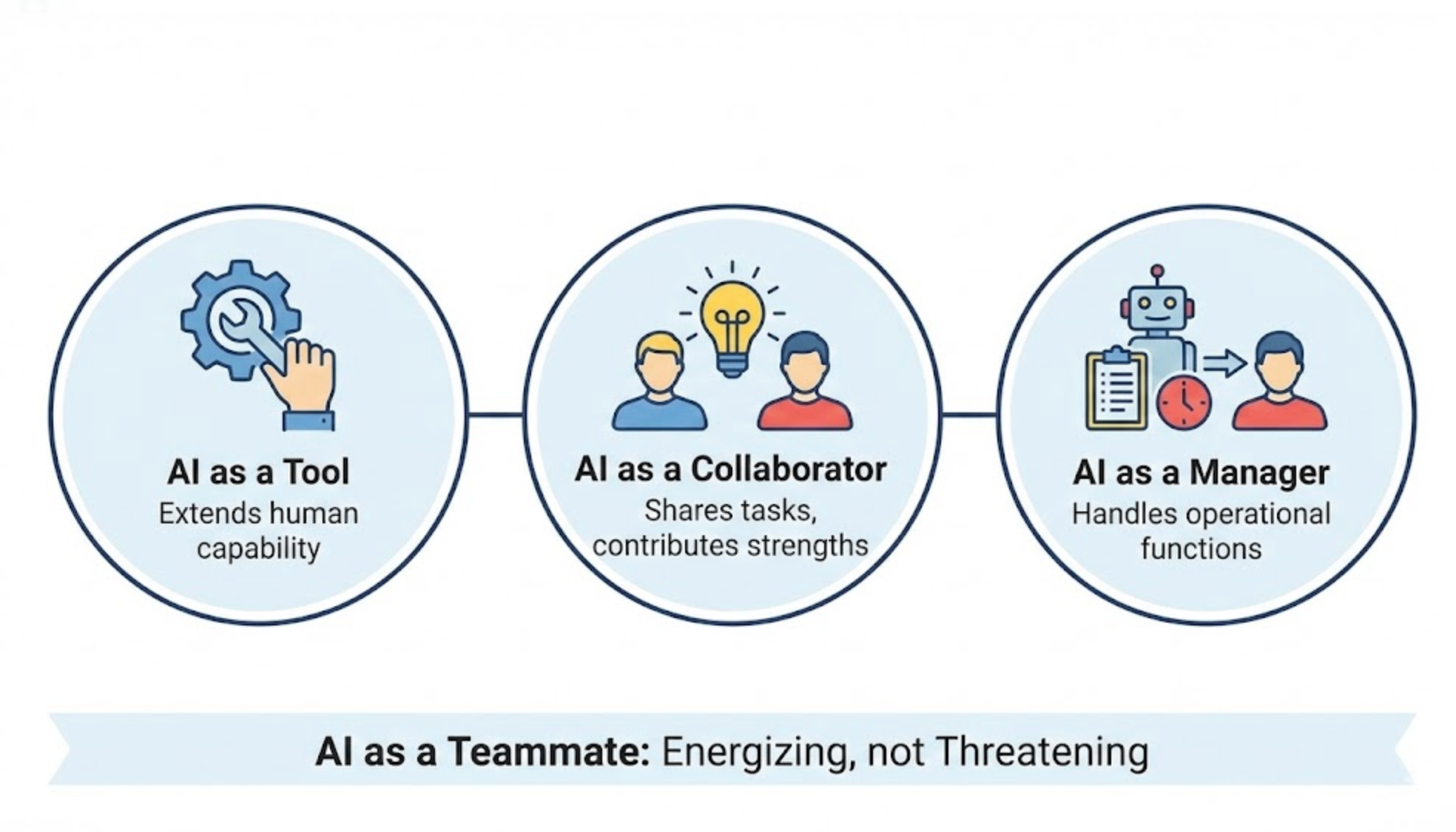

Harvard Business Review's human-AI teaming model: AI on the team

Harvard Business Review frames AI not as a tool to be deployed, but as a teammate to be integrated. Their model defines three modes of human-AI teaming:

AI as a Tool is the most familiar mode. AI provides data-driven insights that inform human decisions. Analytics dashboards, search engines, recommendation systems. The human uses AI the way you use a calculator: it extends your capability, but you're doing the work.

AI as a Collaborator is where things shift. AI and humans share tasks, each contributing their strengths. Harvard Business School research backs this up with hard numbers: in a field experiment at Procter & Gamble with 776 professionals, ideas ranking in the top 10% were three times more likely to come from teams using AI than from individuals working alone. Even more striking, individual workers paired with AI matched the output quality of two-person human teams without AI.

AI as a Manager handles specific organizational functions: scheduling, performance monitoring, workflow optimization. Think of AI systems that allocate resources, route customer tickets, or balance workloads across teams. The AI isn't making strategic decisions, but it's making operational ones that keep everything running smoothly.

The Harvard research revealed something unexpected about the emotional side of AI collaboration. Employees using AI reported higher enthusiasm and energy, and less anxiety, than those working alone. As a teammate, AI didn't feel threatening. It felt energizing. The researchers also found that AI broke down functional silos: teams produced ideas that mixed technical and commercial elements equally, regardless of their departmental background.

How these frameworks fit together

Here's what matters: these six frameworks aren't competitors. They answer different questions about the same challenge.

"How mature is our organization?" Ask Microsoft. Their progression from assisted to autonomous helps you benchmark where you are and plan where you're heading.

"What value should AI create?" Ask Deloitte. Their automate-augment-amplify split helps you prioritize which processes to tackle first.

"How much control should we hand over?" Ask Gartner. Their autonomy spectrum helps you decide which processes need human involvement and which don't.

"When should humans step in?" Ask MIT. Their confidence-based routing ensures humans are involved exactly where they're needed most.

"What role should AI play?" Ask PwC. Their six roles give you the vocabulary to have specific conversations about AI's job description.

"How do humans and AI work together?" Ask Harvard. Their teaming model helps you think about AI as a participant in your team, not just a tool on your shelf.

The organizations getting AI right aren't picking one framework. They're using Microsoft's maturity model to assess readiness, Deloitte's to prioritize use cases, Gartner's to set autonomy levels, MIT's to design safeguards, PwC's to define roles, and Harvard's to build team dynamics. That layered approach is what turns AI from an expensive experiment into an actual competitive advantage.

Start with where you are, not where you want to be. If applying all six at once is too much, begin with the two or three frameworks that match your most pressing questions and layer in the rest over time. And remember: every one of these frameworks puts humans at the center. The goal was never to replace people. It was always to help them do better work.
